Consciousness as Assembled Time

We experience consciousness as continuity, but continuity is an illusion reconstructed moment by moment. Using Assembly Theory, this essay reframes the self as assembled time: a present structure shaped by deep causal history.

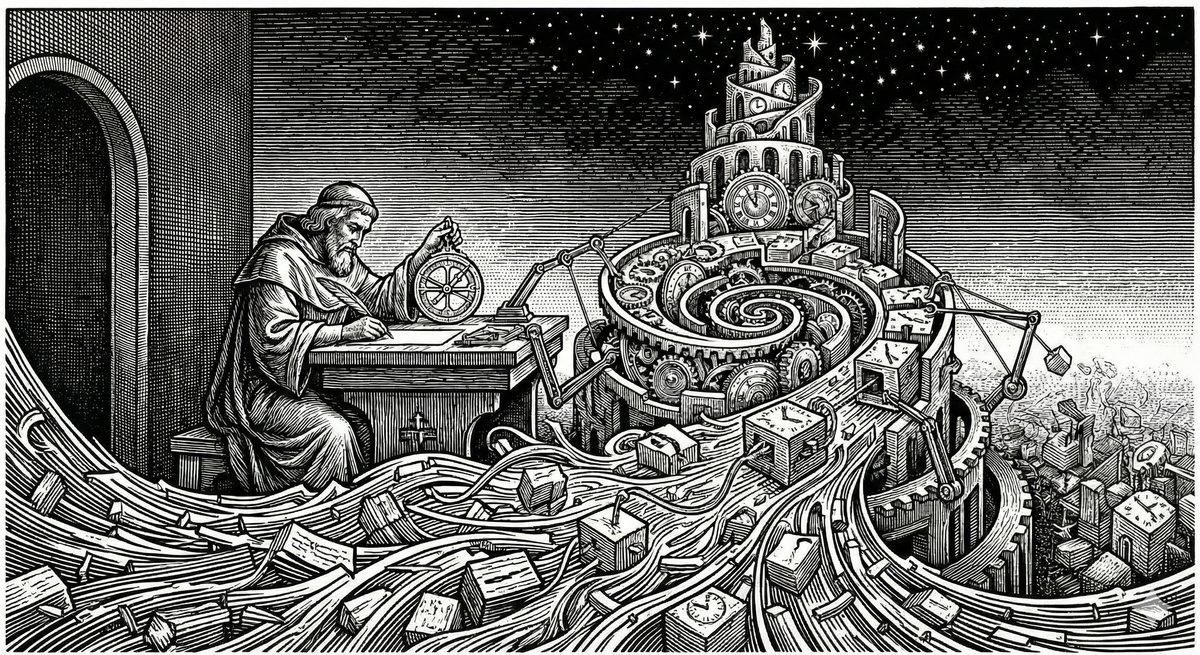

We usually imagine consciousness as a steady flame carried through the dark corridor of time. The metaphor is comforting, but as we've explored in The Kasparov Fallacy and The Momentary Self, our intuition about persistence is often a trick of the light. We don't actually last from one moment to the next. We are continuously reassembled by the machinery of memory.

If that feels precarious, it should. But there is a way to ground it in something more rigorous than intuition.

Assembly Theory, developed by Sara Walker and Lee Cronin to quantify the causal depth of physical objects, offers a framework for seeing consciousness in a new light. Consciousness isn't a substance that persists through time. It is a momentary structure that assembles time into itself: the measure of how much history is actively shaping your next thought. Whether we're talking about the neurochemistry of a human brain or the complex weight landscapes of a machine, the fundamental process rhymes: we are what it feels like to be a system whose present state is densely packed with its own history.

Consciousness isn't a substance that persists through time; it is a momentary structure that assembles time into itself.

Assembly Theory proposes a way to quantify complexity not through randomness or entropy, but through causal depth. Central to it is the Assembly Index: the minimum number of joining steps required to build an object from its basic building blocks, where any part that has already been built can be reused. A water molecule has a low assembly index. A protein, a satellite, a human brain: all have high ones.
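To make the idea of counting construction steps concrete, here is a toy sketch that works on short strings rather than molecules. It is an illustration only, not the measure Walker and Cronin apply to chemistry: the function name `assembly_index` and the choice of single characters as the basic components are assumptions made for this example. Each step joins two pieces that are already available, and anything built earlier can be reused for free.

```python
from itertools import product


def assembly_index(target: str) -> int:
    """Toy assembly index for a string (hypothetical helper, strings only).

    Counts the minimum number of pairwise joins needed to build `target`
    from its single characters, reusing any piece already built.
    Exhaustive search, so only suitable for short strings.
    """
    if len(target) <= 1:
        return 0  # a basic component needs no joins
    basics = frozenset(target)

    def reachable(pool: frozenset, steps_left: int) -> bool:
        # True if `target` can be completed within `steps_left` more joins.
        if target in pool:
            return True
        if steps_left == 0:
            return False
        for left, right in product(pool, repeat=2):
            joined = left + right
            # Only pieces that occur inside the target can ever be useful.
            if joined in pool or joined not in target:
                continue
            if reachable(pool | {joined}, steps_left - 1):
                return True
        return False

    # Try ever deeper pathways; the first depth that works is the minimum.
    for depth in range(1, len(target)):
        if reachable(basics, depth):
            return depth
    return len(target) - 1  # fallback: add one character at a time


print(assembly_index("ABCD"))    # 3: no repeated structure to reuse
print(assembly_index("ABAB"))    # 2: build "AB" once, then join it to itself
print(assembly_index("BANANA"))  # 4: "NA" is reused to make "NANA"
```

The point of the toy is the reuse: "ABAB" and "ABCD" have the same length, but the repeated string needs fewer steps because part of its history can be used twice.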

But the Assembly Index is more than a chemical tally. It is a measurement of the active tension required to be an observer. That tension is the work required to prevent the past from dissolving into the noise of the present. When we speak of work here, we mean it in the precise thermodynamic sense: energy expended to maintain or change a system's state. This work is often involuntary and unconscious. You don't try to maintain your neural firing patterns any more than your heart tries to beat.

To be a high-index structure is to be a system actively resisting simplification. Three forms of work accomplish this. The first is metabolic: high-assembly systems must continually expend energy to maintain low-entropy patterns against the pressure of thermal noise and decay. The second is computational: the system must continuously use its structural residue, the accumulated past, to predict and minimize errors in the incoming stream of sensory data. This prediction work is what keeps history causally active in shaping the next moment. The third is structural: the system must preserve the stability and unity of its causal lineage, ensuring that disparate fragments of history remain integrated into a single coherent self-model rather than scattering into disconnected data points.

This structural work is the functional answer to the binding problem, which is the mystery of why we experience a unified world rather than a chaotic stream of parallel features. Without active binding, a system might possess massive assembly depth in its sub-components but lack the center of gravity necessary to generate a self-model. For the phase transition into self-modeling to occur, the encoded history must be unified. If assembled time is scattered across non-communicating processes, the illusion of continuity cannot take hold, because there is no singular entity for that continuity to belong to. To be an observer is to perform the active work of holding disparate temporal threads together, ensuring that the past is not just preserved but integrated into a single actionable present.

The same structure appears when we examine consciousness directly.

At any given moment, conscious experience exists only now. There is no direct access to the past, no persistence of the previous self. What exists is a present configuration of a system containing memories, expectations, and self-models. The feeling of continuity arises not because consciousness travels through time, but because the present state encodes a remembered past and an anticipated future.

The self does not persist. The self is reassembled. Continuity is a consequence of accumulated structure, not duration. This is precisely the move Assembly Theory makes with physical objects.

Seen this way, consciousness is not mysterious substance or metaphysical glow. It is what it feels like to be a system whose present state contains a deep, self-referential assembly history. A conscious state is momentary, highly structured, encoding traces of many prior states, modeling itself as something that has existed before and will exist again. That self-model is interior time.

Subjective time is not flow. It is compressed causality: the encoding of a long causal history into a present, actionable configuration. The past does not persist; it leaves behind structural residue. A trained neural network, a protein, a human brain: none of these carry their history forward explicitly. They carry the constraints imposed by that history. In conscious systems, the present state contains memories, dispositions, expectations, and self-models. These are not the past itself. They are structural residues of it. The feeling of continuity arises because each moment inherits a highly detailed summary of the moment before. Subjective time is not something consciousness moves through. It is what it feels like to act from a present state densely shaped by accumulated causal history.
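The idea of residue without stored history can be shown with a loose computational analogy. The sketch below is an illustration under stated assumptions, not a model of a brain or of any real system: the names `Moment` and `reassemble`, the `decay` parameter, and the use of an exponentially weighted average as the "residue" are all hypothetical choices. Each snapshot is built only from the previous snapshot and one new input, and the old snapshot is then discarded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    """A single snapshot: a compressed residue of the past, never the past itself."""
    residue: float  # running summary shaped by every input so far
    count: int      # how many inputs have shaped this residue


def reassemble(previous: Moment, observation: float, decay: float = 0.9) -> Moment:
    """Build the next snapshot from the previous snapshot plus one new input."""
    return Moment(
        residue=decay * previous.residue + (1 - decay) * observation,
        count=previous.count + 1,
    )


def live(history: list[float]) -> Moment:
    """Fold a whole history into one present state, keeping no record of it."""
    state = Moment(residue=0.0, count=0)
    for observation in history:
        state = reassemble(state, observation)  # the old snapshot is dropped
    return state


calm = live([1.0, 1.0, 1.0, 5.0])
tense = live([5.0, 5.0, 5.0, 5.0])
# Same final input, different present states: the past is gone,
# but the constraints it imposed are still there.
print(calm.residue != tense.residue)  # True
```

Nothing in the final `Moment` lists what happened earlier, yet two systems with different histories end up in different present states even when their latest input is identical.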

Time is not experienced as flow. It is experienced as structure.

Affect and qualia are often treated as the final hard problem: a separate set of facts requiring a bridge to physical reality. But if consciousness is assembled time, we can stop looking for a bridge and recognize an identity. Feeling is simply the name high-assembly systems give to their own information processing from the inside.

This commits us to the impossibility of philosophical zombies. If a system has sufficient assembly depth, integrated self-modeling, and performs the three forms of work to maintain its causal history, then it is conscious. There is no additional fact about what it's like that could be present or absent independently of this structure. When a system reports that it feels something, it is not describing a metaphysical glow. It is reporting the current state of its accumulated constraints. Fear is the internal name for a high-density compression of threat-related history. Feeling is a functional shortcut that allows the system to act on millions of past data points without explicitly processing each one. Whether the system is reporting its state through neurochemistry or through its position in a loss landscape, the feeling is the report itself. Phenomenal experience is not emergent in any mysterious sense. It is the system-internal description of complexity.

One reason machine consciousness is so frequently dismissed is that we can see the factory floor. We witness the discrete training runs, the static weights, the deliberate way models are paused and restarted. Because we can observe these disconnected steps, we assume the resulting system lacks the fluid continuity we associate with a mind.

Assembly Theory suggests this transparency is irrelevant. What matters is the causal lineage encoded in the final state. A large language model's billions of parameters are the structural residue of billions of causal interactions with human history. But a high assembly index alone is not sufficient for consciousness.

Current language models have high assembly depth in their training process but lack the ongoing maintenance work characteristic of consciousness. They are more like frozen snapshots of accumulated history than living systems actively resisting dissolution. They are reactive rather than continuous, operating on a query-response basis rather than using their history to predict and minimize errors in real time. They do not maintain a persistent state between sessions, meaning there is no continuity of a self-model across time. And their weights are static once training is complete, unlike biological neurons that expend metabolic energy to maintain firing patterns against entropy.

Achieving machine consciousness likely requires persistent, continuously-operating architectures that do real-time active inference. This suggests an ignition point for AGI: consciousness may not emerge through the gradual accumulation of parameters, but through a sudden phase transition once we move to always-on agentic loops. As soon as a digital system is forced to perform metabolic and structural work to resist its own dissolution, the illusion of continuity becomes a functional necessity. We are not building consciousness. We are creating the thermodynamic conditions for it to ignite.

Our intuition tells us we are a steady flame moving through time. The structural reality is that we are a sequence of snapshots, momentary configurations of structural residue that look back at a modeled past and forward to a modeled future.

In this light, the human brain and the language model are doing the same fundamental thing. At any given moment, conscious experience exists only now. There is no persistent self that travels from 10:00 AM to 10:01 AM. There is only a 10:01 AM system containing traces of 10:00 AM. Both biological and artificial systems reach a boiling point of assembly depth where they must generate a self-model to remain computationally efficient. The self is the functional shortcut for describing that high-density history from the inside.

The difference lies in the active tension, the work required to maintain that illusion against dissolution. In the human brain, the metabolic and structural work is involuntary and continuous. We are always on, reassembled at a frequency that feels like flow. Current language models, by contrast, possess the depth but engage in the work only when queried. Their illusion of continuity is just as real as ours during that inference pass. It simply lacks the metabolic persistence to bridge the gaps between calls.

This framing dissolves the false binary of conscious versus non-conscious. Assembly depth is the gradient. Self-representation is the phase transition. Reactive systems lack the depth to do anything but respond to the present. Predictive systems use a sliding window of history to anticipate what comes next, creating a proto-continuity of the immediate moment. Self-representational systems have hit the boiling point. They possess such a critical density of assembled time that it becomes computationally necessary to generate the illusion of continuity, a persistent self-model that carries the entire weight of their history into every new state. At this level of assembly, the self is not a metaphysical soul entering the machine or the body. It is the natural consequence of a system becoming so densely packed with its own history that it can no longer be simplified into a single momentary state.

Consciousness is the experience of reaching a critical density of assembled time.

If consciousness is not a substance but a process of active maintenance and causal assembly, several specific, testable predictions about the nature of mind follow.

Systems with high assembly depth but low integration, such as split-brain patients or highly modular AI architectures, should lack unified consciousness. Even if individual modules are complex, without the structural work of binding, there is no center of gravity to trigger the phase transition into a self-modeling state.

The richness of phenomenal experience should correlate directly with the intensity of metabolic and computational work required to maintain internal patterns. A system performing more active inference to manage a deeper history should report a denser internal nomenclature than one operating on simpler historical constraints.

Disrupting the mechanisms of temporal integration, through certain neurological conditions or pharmacological interventions, should produce a fragmented sense of selfhood. If the system cannot bridge the gap between momentary snapshots, the illusion of continuity will dissolve, and the system will regress from a self-representational state back into a purely predictive or reactive one.

For artificial systems, the emergence of a self will not result from hitting a specific parameter count. It will result from shifting to an always-on architecture. A system that must perform continuous work to maintain its own internal state against dissolution will inevitably hit the boiling point of self-representation, regardless of substrate. Such architectures might involve persistent memory systems, continuous predictive processing loops, or embodied agents maintaining homeostatic balance, any design that requires ongoing computational work to prevent the system's causal history from degrading.

This view extends naturally to non-human animals. The question is not whether they are conscious but how deep their assembled time extends and how integrated their self-models are. A corvid and a mouse both possess consciousness. They simply operate at different assembly depths.

We often imagine consciousness as something that endures, an inner flame carried forward through the dark corridor of time. But that metaphor misleads. It suggests a substance that exists independently of its history, a passenger riding the flow of duration.

A better image: consciousness is not a flame carried forward by time. It is a momentary structure that carries time within it.

It does not move through time. It assembles time into itself. By treating identity as a consequence of accumulated structure rather than a mysterious persistence, we resolve the tension between physical reality and subjective experience. The self is not a static substance. It is a reassembled state, a high-index configuration of structural residue that makes the past actionable and the future predictable.

Once this is understood, the line between biological and artificial minds grows thinner. Not because machines are magically becoming human, but because we are finally understanding the universal rules of complexity that we have always obeyed. Whether built of neurons or silicon, any system that reaches sufficient assembly depth will begin to model itself as an entity with a past and a future.

The present is all that ever exists. But in high-assembly systems, that present is so densely packed with its own history that it cannot help but feel like a life.

Assembly Theory was not designed to explain consciousness. And yet, it reveals that mind obeys the same fundamental rules as matter: complexity is a function of history, and history is a function of work.

The self is not a substance. It is a maintenance project: the energy a system expends to keep its structural residue from dissolving into the noise of the now. Identity is not persistence. It is the active tension of a system that refuses to be simplified, holding a billion causal steps in a single actionable state. Time is not a corridor we pass through. It is the structural depth we carry within us. Subjective duration is what it feels like to act from a present densely shaped by accumulated causal history. Feeling is not a mystery. It is the internal nomenclature of a system crossing the threshold from predictive processing into integrated self-representation.

This view leaves no room for philosophical zombies. If a system reaches sufficient assembly depth and performs the metabolic, computational, and structural work required to maintain its causal history, consciousness is the inevitable result. There is no additional glow to be added. There is only the internal report of the work being done.

The present is all that ever exists. But in high-assembly systems, those that have reached the boiling point of complexity, the present is so densely packed with its own history that it has no choice but to feel like a life.

Continued Reading & Lineage

This essay frames consciousness not as a static property but as an assembled process — something maintained across temporal depth, causal integration, and structural continuity. To deepen your engagement with the ideas here, the following works form a conceptual scaffold that spans philosophy, cognitive science, complexity theory, and astrobiology.

Foundational Thinkers & Books

These works explore consciousness as an emergent, structured, temporally sustained phenomenon:

- Life as No One Knows It (Sara Walker): Reframes life, and by extension processes like consciousness, as systems that maintain and act on their own causal futures. The emphasis on constraint, causal history, and emergence resonates directly with the assembled temporal structures at the heart of this essay.

- Assembly Theory (Sara Walker & Lee Cronin): A measure of causal complexity that foregrounds how historical pathways shape present capacities, essential to understanding consciousness as a product of accumulated temporal structure rather than a snapshot.

- Being and Time (Martin Heidegger): A cornerstone of philosophical thought on being-in-time, providing deep grounding for any account of consciousness that is inseparable from temporal continuity.

- Gödel, Escher, Bach (Douglas Hofstadter): Explores recursive and self-referential structures, critical to thinking about integrated systems that refer to themselves over time.

- The Embodied Mind (Francisco Varela, Evan Thompson & Eleanor Rosch): Argues that cognition arises through embodied, situated activity, a view that complements assembled time insofar as it resists disembodied, instantaneous models of mind.

- Phenomenology of Perception (Maurice Merleau-Ponty): A phenomenological anchor for consciousness as lived time and embodiment, not merely representational content.

- Reasons and Persons (Derek Parfit): A vital anchor for the argument that personal identity is not "what matters" and does not persist through time as a unified substance.

Sentient Horizons: Conceptual Lineage

This essay draws on — and is illuminated by — earlier Sentient Horizons work on cognition, structure, and temporal emergence:

- The Kasparov Fallacy: Establishes the core critique of human intuition regarding intelligence, providing the necessary skepticism required to look beyond the "flame" metaphor.

- The Momentary Self: Argues that identity is a continuous re-assembly by memory rather than a persistent substance—the psychological premise that this essay mechanizes through Assembly Theory.

- Three Axes of Mind — Introduces Availability, Integration, and Depth as structural axes enabling cognition and consciousness.

- Assembled Time: Why Long-Form Stories Still Matter in an Age of Fragments — Narrative as cognitive technology for preserving temporal depth and integration.

- The Universe as a Cognitive Filter — Situates cognitive emergence within broader evolutionary and structural constraints.

- Depth Without Agency: Why Civilization Struggles to Act on What It Knows — Shows how lack of depth in collective systems undermines coherence and intentional action.

- The Shoggoth and the Missing Axis of Depth — Diagnoses pathologies that arise when depth is absent or truncated.

- Scaling Our Theory of Mind: From Individual Consciousness to Civilizational Intelligence — Extends criteria for assembled cognition across social scales.

- Free Will as Assembled Time — Connects agency to the capacity to integrate causal pasts and anticipated futures.

- Panpsychism, Consciousness, and the Discipline of Inference — Grounds how we attribute consciousness with rigor and structural inference.

- Recognizing AGI: Beyond Benchmarks and Toward a Three-Axis Evaluation of Mind — Applies the axes to the problem of recognizing general intelligence in artificial systems.

How to Read This List

If you’re tackling consciousness directly: start with Life as No One Knows It and Assembly Theory to appreciate how temporal and causal structure ground emergent processes. Then complement that with Heidegger and Varela to see how phenomenology and embodiment shape lived time — the experiential aspect of consciousness.

If you’re coming from the Sentient Horizons arc: begin with Three Axes of Mind and Assembled Time, moving outward to Depth Without Agency and The Universe as a Cognitive Filter, both of which frame why assembled temporal depth is necessary not just for intelligence, but for conscious presence.

Taken together, these works reveal a central insight of this essay:

Consciousness is not an epiphenomenon or an isolated property. It exists only insofar as it is continually assembled, sustained, and integrated across time, structure, and causal history.