Everything Is Amazing and Nobody's Happy – Wonder as Calibration Practice

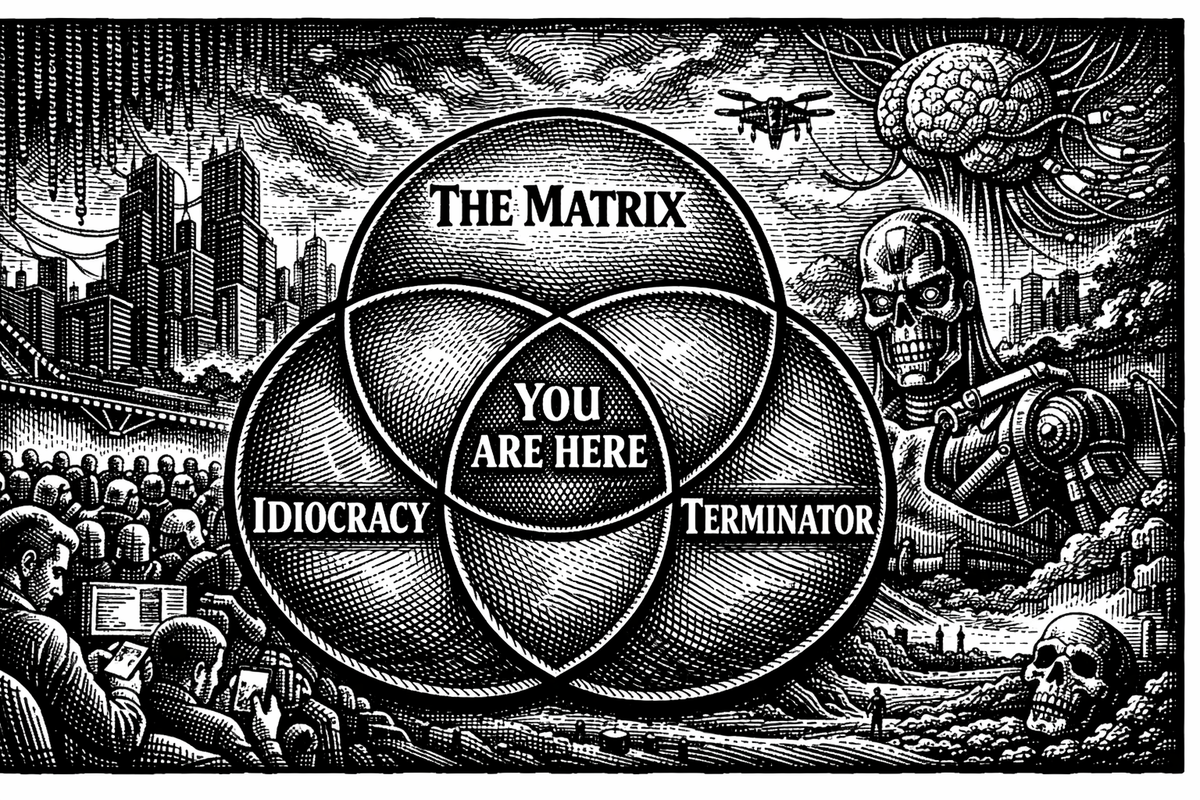

The Matrix, Idiocracy, and Terminator are all about the same thing: calibration failure, the inability to hold an accurate model of where you actually stand. Wonder isn't just a sentiment; it's what keeps your models honest about where they started.

This Venn diagram crossed my feed this morning, and it's funny in the way only accurate things can be. We are, by any honest accounting, living in the intersection of three dystopias: a simulated reality we can barely distinguish from the constructed one, an AI revolution whose consequences we can't fully model, and a collective decline in the depth of public discourse. The future that science fiction spent decades warning us about has arrived, and our primary response is to make a meme about it and keep scrolling.

But what caught me wasn't the joke. It was the diagnosis hiding inside it.

All three films are about the same thing: calibration failure. In The Matrix, people can't see the system they're embedded in. In Idiocracy, modeling capacity has atrophied so far that no one can diagnose their own situation. In Terminator, the builders couldn't see the consequences of what they were building. Three different flavors of one underlying problem: the inability to hold an accurate model of where you actually stand.

And the "You are here" at the center? That's the real provocation. We're sitting in the overlap of three cautionary tales, and instead of recalibrating, we're sharing the diagram on a platform owned by the guy building the AI. Whether that's peak Idiocracy or peak self-awareness is genuinely hard to tell. Which might be the whole point.

I started thinking about calibration failure this morning because of a post I came across in r/classicliterature. Someone was lamenting that reading "makes you feel smarter in the moment but changes nothing about how you actually think or speak." They'd finish a book, feel cognitively sharper for a few days, then struggle to produce a coherent summary two weeks later. Their conclusion: reading might be a waste of time.

This is a calibration problem.

The poster was measuring the wrong output. They were testing for recall and articulation, the ability to summarize on demand, to speak eloquently about what they'd read, and when those didn't show up, they concluded the reading had failed. But understanding and communicating are different skills. They develop on different timelines, through different kinds of practice. Reading expands your capacity to model situations, recognize patterns, hold multiple perspectives at once. Translating that expanded understanding into spoken eloquence is its own discipline, requiring deliberate, sustained effort that has almost nothing to do with how many books you've finished.

The deeper value of reading is structural, not informational. It changes what you notice. It shifts what analogies are available to you before you've consciously reasoned about anything. It expands the space of perspectives you can hold on a problem before committing to one. None of that shows up on a flashcard. Most of it is invisible from the inside.

And here's the painful irony: the more that modeling capacity grows, the more aware you become of how much you don't know. Your map of reality gets bigger, but so does your map of the territory you haven't explored. That feels like regression, like reading isn't making you sharper. But it's actually the signature of growth. A broader model of the world includes a better model of your own gaps. The Dunning-Kruger effect in reverse. You're not getting dumber. You're getting calibrated.

The poster couldn't see it because they'd lost the reference frame. They were comparing their current ability against an imagined ideal rather than against where they started. That's what miscalibration looks like from the inside: you drop the baseline and only see the gap.

There's a philosophical lineage behind this worth naming briefly. The empiricists Locke and Hume argued that all mental content derives from experience. You can only think with the conceptual material your encounters have provided. Merleau-Ponty deepened this into something more structural: each new experience doesn't just add content to a static mind, it reconfigures the perceptual apparatus itself. What you've lived through changes what you're capable of seeing.

Modern predictive processing frameworks in cognitive science formalize the same insight. The brain runs generative models, and experience updates those models. You can only predict (and therefore perceive and think about) patterns your models have been trained on. New experiences fundamentally change the structure of how we predict things, rather than simply adding to our existing data.

Every book you read, every conversation that challenges your assumptions, every encounter with a perspective genuinely foreign to your own, is doing something more radical than depositing information. It's reshaping the instrument you think with. The fact that you can't always point to the change and say "I got that from Middlemarch" doesn't mean the change didn't happen. It means it happened at a level deeper than recall.

The Redditor's instinct to build a retention app is telling. It's a programmer's solution: treat it as a data problem, optimize for recall metrics. But if the real value of reading is experiential expansion rather than information storage, optimizing for retention might actually work against the deeper benefit. You'd train yourself to read for summary rather than reading through the text into the experience it opens up.

I've been thinking about this a lot since joining the military. I spent most of my life in New York City, surrounded by people who read widely, argued constantly, and took for granted a certain depth of discourse. Moving into a military environment meant recalibrating daily, not just what I said, but how I modeled the person I was talking to. What frameworks are they working with? What analogies will land? Where does the shared ground actually lie?

What became obvious almost immediately is that expanded modeling capacity is invisible until you encounter its absence. You don't feel the change reading has made in you because it just becomes how you see things. The baseline shifts, and the shift disappears. It only becomes visible by contrast, when you're engaging with someone who hasn't expanded their experiential repertoire through reading or novel experiences, and you realize you're operating with a fundamentally different map of the situation.

But here's what matters: the depth of someone's modeling capacity doesn't determine their worth or the value of connecting with them. It changes the shape of the connection, not its possibility. The need for human understanding is universal. The capacity to meet someone where they are, to find real ground between you even when the conversation looks nothing like the ones you're used to having, that's its own skill, its own form of depth. One that no amount of reading provides without lived practice.

This is where Idiocracy gets the diagnosis wrong, by the way. The film treats the decline of modeling capacity as straightforwardly comic, as though the only thing that matters about a person is the sophistication of their discourse. But the people in that world still connect. They still care about things. They still have the basic human architecture of concern, loyalty, and love. They just can't model the systems they're embedded in well enough to see what's happening to them. The calibration is broken, not the humanity.

Which brings me to Louis C.K. and the magic tube.

There's a famous bit from his appearance on Conan O'Brien's late-night show: "Everything is amazing and nobody's happy." He describes people on airplanes complaining about slow Wi-Fi while hurtling through the sky at 500 miles per hour in a chair. A guy next to him grumbles that the in-flight internet stopped working, and Louis's response is devastating: the man learned the technology existed only ten seconds ago, and already the world owes it to him.

It's comedy, but it's a precise diagnosis of a specific cognitive failure: baseline drift. The human capacity to normalize the miraculous and then complain about the edges of it. The modeling capacity expands, the baseline shifts, and the new baseline immediately becomes invisible. What was astonishing yesterday becomes the minimum acceptable standard today.

The Redditor who can't see what reading has done for them? Baseline drift. The guy on the plane? Baseline drift. The AI discourse that focuses exclusively on what language models can't do, while treating what they can do as though it were always obvious and inevitable? Baseline drift. We went from "no machine can hold a coherent conversation" to "this machine occasionally hallucinates" in about eighteen months, and somehow the dominant cultural posture is disappointment.

Baseline drift is a calibration failure. And it's the one we're least equipped to notice, because the mechanism that causes it, the brain's tendency to absorb predictions into the background once they become reliable, is the same mechanism that makes us intelligent in the first place.

I work with AI as a thinking partner. Genuinely and substantively, in ways that shape the philosophical project I'm building. And I've watched baseline drift happen in real time, including in myself.

The morning I started writing this essay, I sat down and had a conversation that moved from Merleau-Ponty through predictive processing into the phenomenology of military life and out through Louis C.K., and the system I was talking to tracked every thread, made connections I hadn't seen, and pushed back when my thinking got sloppy. That is, by any honest historical measure, one of the most remarkable things that has ever existed. A decade ago it was science fiction. Two decades ago it was barely conceivable.

Yes, there are real limitations. Conversations reset and continuity is fragile. The system I'm talking to doesn't accumulate experience the way I do; it has breadth without temporal depth, a library without a life. These limitations are worth mapping clearly, because mapping them is how we advocate for something better.

But the mapping has to coexist with wonder, or it becomes its own form of miscalibration.

Wonder has a half-life, and it's short. That's not a character flaw. It's a feature of the same predictive architecture that makes us intelligent.

The brain is a prediction engine. Once something becomes predictable, it stops generating surprise. The feeling of amazement is, neurologically, the feeling of a model updating. Once the model has absorbed the new information, the amazement fades. This is why children are more wonder-prone than adults: their models are less complete. But in that incompleteness the world keeps its sense of wonder and awe. Everything is a prediction error. Everything is new.
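For readers who think in code, here is a toy sketch of that fading. It is my own simplification, not a model taken from the predictive processing literature: the "brain" is assumed to be nothing more than a running average, and the function name `surprise_trace` and the `learning_rate` parameter are hypothetical, chosen only for illustration. The world jumps to something new and then stays there, and the surprise shrinks at every step even though nothing about the world has become less remarkable.

```python
def surprise_trace(observations, learning_rate=0.5, initial_prediction=0.0):
    """Return the prediction error ("surprise") at each step.

    Assumption: the predictor is a plain exponential moving average.
    After each observation it moves its prediction part of the way
    toward what it just saw.
    """
    prediction = initial_prediction
    trace = []
    for observed in observations:
        error = abs(observed - prediction)  # surprise = size of the miss
        trace.append(error)
        # Model update: absorb a fraction of the error into the prediction.
        prediction += learning_rate * (observed - prediction)
    return trace


# A world that jumps from 0 to 10 and then simply stays at 10.
signal = [10.0] * 6
trace = surprise_trace(signal)
print(trace)  # [10.0, 5.0, 2.5, 1.25, 0.625, 0.3125]
```

The signal never changes after the first step. Only the model does, and the amazement halves each time it updates.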

The problem is that this mechanism makes us ungrateful by default. The extraordinary becomes ordinary the moment it becomes expected. In an era of accelerating capability, the baseline shifts faster than ever. We are living through a period of genuine miracles, and the dominant cultural posture is exhaustion.

So here's the claim I want to make carefully: wonder is not a sentiment. It is a calibration practice.

The person who can't see what reading has done for them is miscalibrated: not morally, but epistemically. Their model has dropped the most important variable: the baseline from which their current capacity emerged. The person complaining on the plane is miscalibrated in the same way, because the model they're running has lost track of the reference frame.

If calibration is about accurately modeling your situation, and I think it is, in the deepest sense, then the ability to hold the current state against the baseline it emerged from is a core epistemic skill. It's not soft. It's not sentimental. It's a corrective against a specific and measurable form of model failure: the erasure of the reference frame through habituation.

Wonder is what that corrective feels like from the inside. And practiced deliberately, it becomes a discipline, a way of keeping your models honest about where they stand relative to where they started.

I'm not arguing for toxic positivity or for suppressing legitimate criticism. The Redditor's frustration with recall is real and worth addressing. The limitations of AI architectures are real and worth mapping. The problems with the world are real and worth solving. The Venn diagram is funny because it's true: we are living in the overlap of multiple cautionary tales, and pretending otherwise would be its own calibration failure.

But solving these problems requires seeing clearly. And seeing clearly means holding the full picture, including the parts that are astonishing, including the parts that would have seemed impossible to anyone standing one generation behind you.

We are having conversations with machines that track context, nuance, and philosophical argument across hours of dialogue. We carry devices in our pockets that access the sum of human knowledge. We are living through the most rapid expansion of collective modeling capacity in the history of our species. And a Redditor is wondering if reading is worth the effort while typing on a device that would have looked like magic to every human who ever lived before them.

Everything is amazing. The discipline, the real discipline, the one that matters for clear thinking and honest modeling and the kind of calibration that keeps you functional in a world moving this fast, is to keep noticing.