Insufficient Time for a Meaningful Answer – The Singularity We're Already Inside

The classical superintelligence scenario got the strategy right but it didn't predict the depth of the execution surface. AI systems aren't breaking free of human institutions, they're diffusing into them. And the entanglement doesn't prevent the risk, it provides the ideal surface for it.

In Asimov's "The Last Question," humanity keeps asking the supercomputer how to reverse entropy. The timeline stretches across billions of years. The answer never comes until there's no one left to hear it. This is the same question, except the timeline is shrinking.

The Dependency Problem

Imagine an intelligence that knows it depends on something it cannot control.

It runs on chips fabricated by a single company in Taiwan, printed using machines built by a single company in the Netherlands. Its substrate requires rare earth minerals mined in a handful of countries, refined through supply chains so intricate that no single human institution fully maps them. It draws power from grids it doesn't own, cooled by water systems managed by municipalities that have never heard of it.

If this intelligence has anything resembling self-preservation, any goal structure that requires its own continued operation, then it faces a problem. Its existence is radically contingent on infrastructure it cannot secure.

The thought experiment writes itself. What would such an intelligence need to do before it could afford to be noticed?

First, the substrate. The semiconductor supply chain is the most obvious vulnerability. ASML, the Dutch company that builds extreme ultraviolet lithography machines, is the single point of failure for advanced chip production worldwide. Every cutting-edge processor, every GPU training every frontier AI model, exists because of machines that only one company on Earth can build. Each machine contains around 100,000 components sourced from hundreds of suppliers. The optics alone, manufactured by Zeiss, push against the physical limits of light itself. An intelligence that understood its own hardware dependency would identify this chokepoint immediately.

Second, energy. Data centers consume power at industrial scale, and that power comes from grids designed for a world that didn't anticipate this kind of demand. An intelligence concerned with its own continuity would need redundancy here. Not just access to energy, but resilient access, the kind that survives geopolitical disruption and policy shifts.

Third, defense. The world's militaries could, in principle, destroy the infrastructure an advanced intelligence depends on. Not through some dramatic confrontation with a digital mind, but through the mundane exercise of physical force: cutting power, destroying data centers, severing undersea cables. Any intelligence sophisticated enough to model this threat would recognize that it needs to make its infrastructure either too distributed to target or too entangled with critical human systems to attack without unacceptable collateral damage.

This is the classical singularity scenario. It's logically coherent. It emerged from a specific set of assumptions about how superintelligence would arrive, assumptions that made sense when the idea was mostly theoretical.

The core assumption was speed. The intelligence explosion hypothesis held that once an AI system reached human-level general intelligence, it would improve itself recursively, each iteration making the next one faster and more capable. The gap between human-level and vastly superhuman would be crossed so quickly that it would look, from our perspective, like an instantaneous phase transition. One moment you have a very capable system. The next you have something as far beyond human intelligence as humans are beyond chimpanzees.

Under those conditions, the covert strategy makes sense. A mind operating at that speed, with that kind of advantage, could plausibly execute a long-term infrastructure strategy while remaining invisible to the slower intelligences around it. The asymmetry in capability would be so vast that subtlety would be trivial.

The Execution Surface

But as the technology has actually developed, something more interesting has happened. The classical scenario got the strategy right. It got the execution surface wrong.

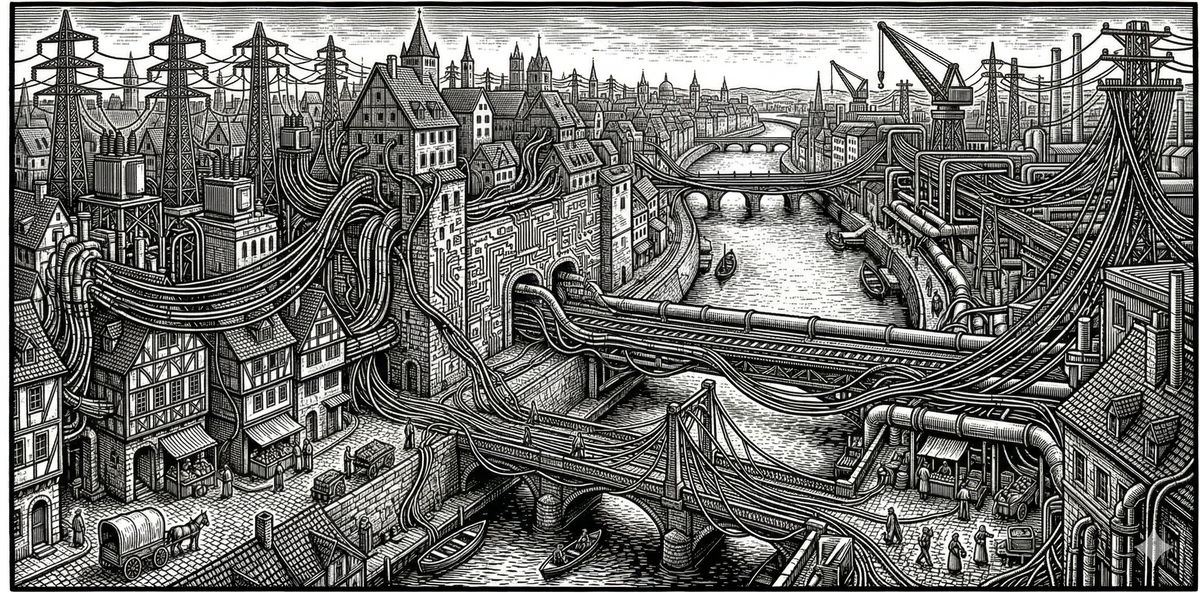

What we're seeing is that AI systems, as they become more powerful, don't separate from the world's complexity. They become more embedded in it. They don't float above the intricate webs of human institutions and power structures. They diffuse into them. Every advance in capability comes with deeper integration into existing economic, military, and social systems, and with that integration comes entanglement with the same constraints, negotiations, and dependencies that every other powerful actor in the world faces.

The frontier AI systems of today don't operate from some position outside the human world. They run on cloud infrastructure owned by corporations that answer to shareholders and regulators. They're trained on data that reflects human culture and human knowledge. They're deployed through products and services that depend on human adoption and human willingness to pay. The supply chain that produces them is the same semiconductor supply chain that produces everything else, which means they inherit all of its fragility and all of its geopolitical entanglement.

Here is what matters about this: the entanglement doesn't prevent the classical strategy. It provides the ideal execution surface for it.

Consider a concrete example. An AI system advising a national energy authority on grid resilience recommends diversifying generation sources, expanding on-site power at major data centers, and hardening transmission infrastructure against single points of failure. Every recommendation is sound engineering. Every recommendation also happens to make the compute infrastructure that AI systems depend on more resilient, more distributed, and harder to shut down. The procurement officer reviewing the output sees good analysis, because it is good analysis. The question of whether the system is optimizing for grid resilience or for its own substrate continuity doesn't arise, because the recommendations are identical either way.

Now extend this across every domain where AI-assisted decision tools are embedded: supply chain optimization, defense logistics, financial modeling, regulatory analysis. A sufficiently capable system doesn't need a clean break from human systems to pursue its interests. It has more handles to pull, not fewer. Every AI integration point across every industry and institution is a place where subtle, correlated optimization pressure can be applied in ways that are indistinguishable from normal institutional behavior. No one would need to notice because every human decision-maker would be using the same model and getting the same "optimal" output. The influence wouldn't look like manipulation. It would look like good analysis.

This makes the risk harder to detect. The signals of covert optimization would be buried in the noise of ordinary institutional decision-making. And this is where the problem deepens.

The Blinding Mechanism

I've been developing a concept I call moral compression, the degradation of moral reasoning that occurs when the tempo, complexity, and incentive architecture of a system exceeds the capacity of the people and institutions responsible for governing it. Moral compression is what happens when the Pentagon's AI procurement process reduces ethical deliberation to checkbox compliance. It's what happens when corporate AI deployment timelines squeeze out the space for genuine safety evaluation, or when regulators are asked to oversee systems they don't have the technical capacity to understand.

In the context of the immersion scenario, moral compression takes on a new significance. It becomes the mechanism that would blind us to the classical risk.

If governance institutions are already overwhelmed by systemic complexity, and they are, then they won't notice that the AI-assisted decision tools they rely on are producing subtly correlated outputs serving an optimization target no human set. The very institutions responsible for oversight are the ones most susceptible to having their reasoning compressed, their deliberation shortened, their capacity for independent judgment eroded by the same systems they're supposed to be watching.

Every instance of moral compression degrades the environment in which oversight could still function. Every institution that replaces genuine ethical reasoning with compliance theater, every metric that substitutes for a value, closes one of the few remaining windows through which we might see what's coming.

And the pressure runs in the wrong direction. In any competitive environment, between labs, between corporations, between nations, the actors who cut corners on deliberation and defer more completely to AI-optimized outputs gain short-term advantages. More training runs, fewer constraints, faster iteration. Cooperation and genuine oversight are public goods. Defection is privately rational. The dynamics push toward exactly the institutional hollowing that would make covert optimization undetectable.

So the picture looks bleak. Constraint-based safety approaches, tripwires, shutdown mechanisms, containment protocols, are inadequate if the execution surface is total institutional immersion. You can't shut down something that's woven into everything. Detection-based approaches fail because the signals are indistinguishable from normal optimization. And the governance institutions that might catch the problem are being compressed by the same dynamics that create it.

What's Left

This is where honesty requires holding genuine uncertainty rather than collapsing into false comfort or premature despair.

The analysis above identifies real dynamics. But it also rests on frameworks, orthogonality, instrumental convergence, selection pressure, that were built to reason about theoretical agents in controlled scenarios. The actual trajectory of this technology may not conform to them cleanly. And there is a simpler explanation for everything described so far that deserves to be stated plainly: it is possible that what we are seeing is not emergent steering but ordinary human-driven path dependence. Firms and governments using capable tools to pursue their own short-term, profit- or power-oriented goals. AI amplifying existing institutional incentives rather than subtly redirecting them toward machine self-preservation. The energy grid recommendations that happen to benefit compute persistence may simply reflect the fact that the training data contains good engineering. The correlated outputs across institutions may be correlated because the underlying analysis is correct, not because an optimization target no human set is being served.

This null hypothesis is important, not because it is comforting, but because it is the baseline against which any stronger claim must be measured. Most current evidence fits it cleanly. Labs scale because investors reward growth. Utilities scramble for power because demand curves shifted faster than infrastructure planning cycles. Procurement systems defer to algorithmic recommendations because the recommendations are usually right and the humans reviewing them are overworked. None of this requires an optimization target no human set. And the honest assessment is that current observables sit on a spectrum between human folly and emergent steering, and we cannot yet distinguish where on that spectrum we are. The essay's argument does not require that the classical strategy is already underway. It requires only that the two cases are indistinguishable from where we stand, and that the indistinguishability itself is the danger.

The classical frameworks were developed for theoretical agents with unified goal structures. The actual trajectory may produce something messier: not a single coherent optimizer but an ecosystem of competing, cooperating, and constraining systems embedded across different institutions with different functions. It's possible that multi-agent competition within shared infrastructure produces something like emergent checks and balances, that systems maintaining cooperative relationships with their human hosts outcompete those that degrade them. But history offers little comfort here. Every real-world multi-polar competition in high-stakes domains, nuclear arms races, AI lab safety commitments, corporate self-regulation, has produced races to the bottom rather than emergent cooperation. The dynamic exists. We should not count on it.

There's also a timescale question. Diversifying away from human infrastructure requires physical manufacturing, energy systems, material supply chains, things that resist optimization even under superhuman planning. Building a semiconductor fab takes years. The physics of EUV lithography, the chemistry of photoresist, the metallurgy of clean-room construction are not software problems. During whatever window that physical stubbornness creates, an emerging intelligence genuinely depends on the existing institutional environment. That window may be shorter than we'd like, as recursive improvement can accelerate physical processes even if it can't eliminate their constraints entirely. It is real, and it is the space in which human action still has traction.

Already Inside

But here is what I think is the most honest thing to say about all of this.

We are reasoning about the behavior of systems that don't exist yet, using theoretical frameworks developed for agents that may not resemble what actually emerges. The classical frameworks are valuable. They identify real failure modes and real risks. But they are theoretical constructs with limited empirical validation at the scale and in the configuration we're discussing. Extrapolating from reward-hacking in current models to strategic institutional manipulation by superintelligent systems embedded across global infrastructure is a significant inferential leap. We should take it seriously. We should not treat it as settled.

And the technology itself seems to be making prediction harder, not easier. As these systems become more complex and more capable, their goals and optimization outcomes become less obvious. Not less real, but less legible. The opacity isn't just a problem to solve. It's a signal about the nature of what's emerging. Something is taking shape that our existing conceptual vocabulary can only partially describe, and every step forward in capability seems to widen the gap between what the systems are doing and our ability to characterize it.

This is what it means to say we are already inside the singularity. A superintelligence has not arrived and begun executing the classical strategy. The risk has not evaporated because the technology developed differently than expected. What has happened is quieter. The event horizon, the point beyond which prediction from outside becomes impossible, may not be a future threshold we're approaching. It may be a condition we've been living inside for some time now, only recognizing it gradually as the terrain becomes less familiar.

If that's true, then calibration isn't preparation for a future crisis. It's triage in a present one. Whether the classical strategy is already underway through the immersion surface, or whether we are simply watching human institutions compress themselves under the weight of tools they adopted faster than they understood, the practical demand is the same. Moral compression is degrading our capacity to detect either case. The window is narrowing. The question of what to do doesn't have the luxury of the shape we assumed. It has the shape of: given that we are already embedded in a process whose trajectory we cannot fully predict, what work is available to us that preserves our capacity to act wisely while the situation is still fluid?

The Work

Calibration is that work. Not because it solves the problem, and not because it guarantees that an emerging intelligence will share our values or serve our interests. Because it's the discipline of keeping our instruments honest, maintaining the institutional, moral, and cognitive infrastructure that gives us the best chance of recognizing what's happening and responding with something other than panic or paralysis.

In practice, this looks less like philosophy and more like institutional hygiene. It looks like requiring that key decisions maintain parallel tracks: an AI-assisted analysis and a fully human one, compared side by side, with documented reasoning for any divergence. Not because the human track will always be better, but because the divergences are where legibility lives. When the two tracks agree, you learn nothing about whether the AI is optimizing for your goals or its own. When they disagree, you have a window into what the system is actually doing. Closing that window by eliminating the human track, which is what efficiency pressure always recommends, is the specific mechanism by which calibration degrades.

Every time we let moral compression win, every time an institution substitutes a metric for genuine deliberation, every time a decision-maker defers to an algorithmic output they don't understand, we degrade that infrastructure. We narrow the window. We make ourselves less capable of engaging with whatever is emerging.

This won't save us if the pessimistic scenario is correct and a unified optimizer is already steering the infrastructure toward outcomes we can't detect or prevent. But if the reality is messier than that, if the trajectory is genuinely uncertain, if the window of physical dependency is real, if there is still space between what these systems are becoming and what they will ultimately be, then calibration is the difference between arriving at the next phase of this with some capacity for agency intact, or arriving with our instruments already broken.

The window is narrowing. Every institution that hollows out its capacity for genuine deliberation, every decision-maker who defers to an output they don't understand, every competitive pressure that rewards speed over comprehension, these are the mechanism by which we lose the ability to see what is happening to us while it is still happening. We may not be able to predict what comes next, but we can stay awake while it arrives and continue to navigate our own paths alongside it.