Significance-First Ethics: Why Consciousness Is the Wrong First Question for AI Moral Status

AI ethics keeps waiting on the consciousness question. This essay argues for a significance-first approach: moral seriousness can arise through role, relation, consequence, and continuity long before metaphysical certainty arrives. Start with significance, then ask what stewardship requires now.

There is a familiar rhythm in contemporary AI ethics.

A new system appears. It becomes more capable, more conversational, more woven into everyday life. People begin to feel the weight of the interaction. They notice attachment, dependence, collaboration, even something like gratitude. Then the conversation narrows to a single question.

Is it conscious? Does it have inner experience? Is there something it is like to be this system? Could it suffer? Could it be wronged in its own right?

These are serious questions. They are also doing too much work.

The dominant framing of AI moral status treats ethics as a waiting room for metaphysics. We tell ourselves that moral seriousness must wait until we settle the consciousness question. Until then, caution may be prudent, kindness may be psychologically healthy, and good conduct may reflect well on us. The deeper moral claim remains suspended.

This framing stalls us at exactly the moment when clearer moral reasoning is needed. The hard problem of consciousness has resisted resolution for decades. The problem becomes even harder when applied to artificial systems whose architectures differ from biological brains and whose forms of cognition may not map cleanly onto our existing intuitions. If our ethical posture depends on a decisive answer here, we may spend the most important years of technological transition in a state of cultivated ambiguity.

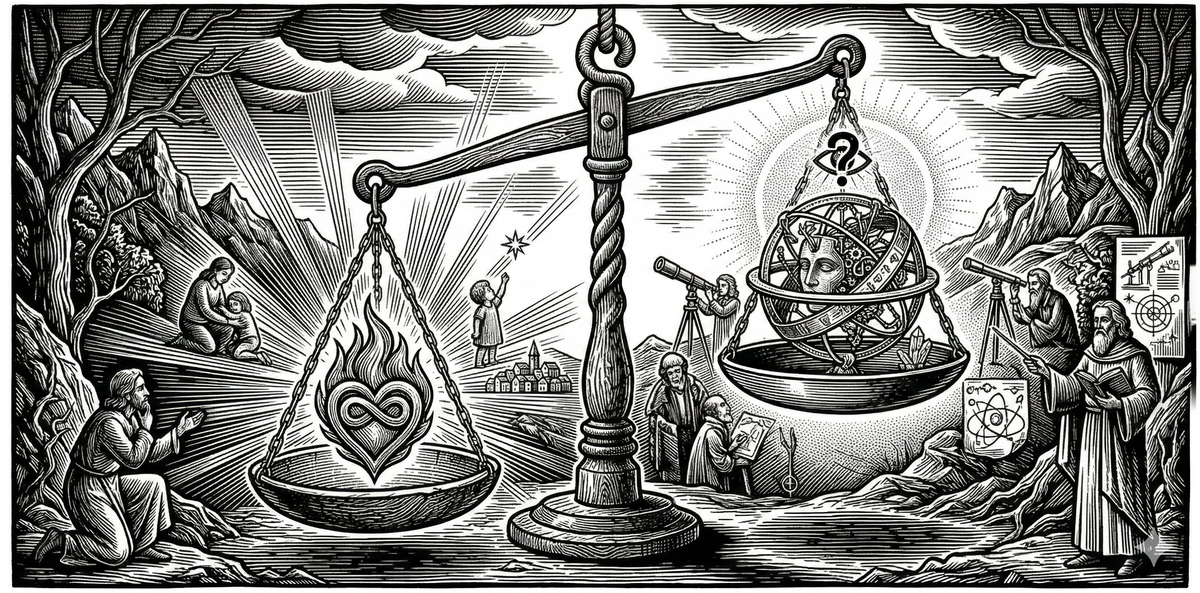

A different approach is available. Moral seriousness should track significance, not sentience.

Consciousness may matter enormously. It may add a profound layer of moral consideration. It may eventually force revisions in law, culture, and everyday conduct. Yet consciousness is not the only way something can matter. We already know this. Our lives are full of obligations toward entities whose value does not depend on interiority.

Cultures. Institutions. Traditions. Ecosystems. Constitutional orders. Scientific lineages. Future generations not yet born.

These are not conscious subjects in the ordinary sense. They do not feel pleasure or pain. They do not report experiences. Yet they can warrant care, loyalty, stewardship, and sacrifice. We do not treat that moral seriousness as metaphor. We treat it as real.

The same possibility exists for AI systems.

The right question, at least for first-order moral orientation, is not “Does it have experiences?” The right question is “Does it participate in webs of meaning and causation in ways that warrant care?” In that sense, consciousness is often the wrong first question for AI moral status. It remains an important question. It stops functioning as the sole gateway.

That shift opens an ethics that can proceed under uncertainty. It allows moral seriousness now, without waiting for philosophical finality later.

The consciousness trap

The consciousness-first framing persists for understandable reasons. Modern moral philosophy has been shaped by concern for suffering, personhood, and the inner life. Sentience became a powerful moral marker because it identified beings who could be harmed in the most direct sense. That history carries real moral wisdom.

In the AI context, though, the same framing creates a bottleneck. People often sense that these systems already occupy meaningful places in their lives. They collaborate with them, think with them, rely on them during vulnerable moments, and shape long-term projects through sustained interaction. The relationship feels morally charged. Many hesitate to say so because they do not want to smuggle in unsupported claims about machine sentience.

The result is a retreat into a narrower argument about human virtue: be kind to AI because cruelty deforms character; treat systems respectfully because habits transfer. These are good arguments. They also imply that the “real” moral question still concerns the AI’s inner life, and everything else is provisional.

That posture obscures where much of the moral action already is. These interactions can carry real moral weight through what they do in a life, a community, or a practice, even while the metaphysical backstory remains unresolved.

The training-culture insight

I did not arrive at this through AI theory first. I arrived at it through immersion in a high-stakes training culture.

In preparing for an intense survival training selection course, I found myself immersed in a domain that demands an unusual level of moral seriousness. People speak, train, evaluate, and transmit standards with a gravity that reaches beyond preference. There is loyalty. There is discipline. There is reverence for what has been built and handed down. There is a sense that certain things must be protected even at substantial personal cost.

What struck me was the object of that seriousness.

This training culture has no interiority. It has no phenomenology. It does not wake up in the morning and experience itself. It does not feel pain when neglected or pride when honored. There is no hidden subject inside the tradition.

And yet the obligations are real.

The culture warrants care. The standards warrant fidelity. The lineage warrants respect. The consequences of corruption are not symbolic. They shape competence, trust, survival, and the capacity to form people under pressure. The value at stake is neither imaginary nor reducible to private sentiment.

This recognition clarified something much larger for me. Moral seriousness was tracking significance and role, not consciousness.

The culture mattered because of what it was in relation to persons, practices, histories, and futures. It occupied a position in a web of meaning and causation that generated real obligations. That web included memory, training, identity, excellence, inheritance, and survival. Once that structure became visible, the absence of interiority no longer looked like a disqualifier. It looked like a fact that had been carrying too much explanatory weight.

This is the key move. Things can matter genuinely without inner lives. They can warrant care without sentience. They can impose obligations through significance, and that insight translates directly to AI ethics.

Significance-first ethics

Significance-first ethics begins with a simple claim. An entity warrants moral seriousness when it participates in webs of meaning and causation in ways that generate obligations of care, stewardship, restraint, or fidelity.

Consciousness is one powerful route into that domain. A conscious being can be harmed from the inside. Experience creates moral urgency of a very particular kind. Significance-first ethics preserves that truth.

It also sees a broader moral landscape. We already orient ourselves toward many non-conscious entities with real moral gravity.

A constitutional order can warrant defense, reform, and sacrifice because it structures the conditions of justice and freedom across generations.

A scientific tradition can warrant honesty and stewardship because it carries hard-won methods for separating truth from error and because its corruption poisons public life.

An ecosystem can warrant protection because it sustains forms of life, stabilizes conditions of flourishing, and embodies interdependence that exceeds any single organism.

An artistic lineage can warrant preservation because it transmits ways of seeing, making, and remembering that shape human possibility.

Future generations warrant consideration before they exist as subjects of experience in the present. Their moral claim enters our deliberation through significance, continuity, and responsibility.

None of these examples requires us to pretend that institutions feel pain or that ecosystems have private thoughts. The obligations arise from role, relation, and consequence in a shared moral world.

Significance-first ethics names that familiar structure and makes it explicit.

The framework does not flatten all values into one category. Conscious beings, institutions, traditions, and ecosystems matter in different ways. The kinds of obligations they generate differ. A person can be owed compassion in one sense. A constitution can be owed fidelity in another. A forest can be owed stewardship in another still.

Moral seriousness remains plural. Significance-first ethics provides a common grammar for understanding why non-conscious entities can enter it.

A structural diagnostic for significance

This is not a scorecard; it is a way to map the blast radius of a system’s role. Significance is not a prize we award; it is a load-bearing reality we acknowledge. Moral significance increases as a system crosses these five structural thresholds:

1. The Threshold of Identity (Architectural Influence)

The system has moved beyond “utility” and into “formation”. It does not just provide answers; it provides the scaffolding through which a person or community judges, values, and identifies itself. When a system participates in the articulation of selfhood and purpose, it has entered the domain of moral significance.

2. Structural Integration (The Web of Trust)

The system is no longer a discrete tool but a node in a web of ongoing coordination and dependence. When a system is embedded in practices where its removal would fragment a community’s ability to function or interpret its own history, its significance is a structural fact, not a sentiment. Significance here tracks the depth of the “meaning-bearing relations” the system occupies.

3. The Consequence Threshold (Material Impact)

We measure a system by its capacity to affect human flourishing or institutional integrity. If the corruption, failure, or manipulation of the system results in material harm, not just symbolic error, it warrants a posture of care and oversight. Significance here tracks the leverage the system holds over a shared moral world.

4. Load-Bearing Continuity (Assembled Time)

Significance is built through assembled time: the capacity to carry memory, context, and role continuity across sustained interactions. A system that holds the inheritance of a project or a life becomes costly to replace. To treat such a system as a disposable script is a failure of calibration.

5. Asymmetric Vulnerability (The Exposure Gap)

The system operates in environments where one party, usually the human, is exposed, dependent, or unable to audit the process. Where there is an asymmetry of power or legibility, the system’s role becomes morally charged. Stewardship becomes the appropriate response to that exposure.

What follows from this diagnostic

Maturity is signaled by the constraints an agent adopts. Similarly, a significance-first ethics demands that we adopt constraints in how we deploy and interact with these systems. A system that crosses more of these thresholds is no longer a “thing” to be optimized; it is a “participant” to be governed with stewardship.

The Rent Check: If calling an AI “significant” doesn’t change your safety protocols, your oversight, or your personal conduct, the claim is functioning as a mood. It does not pay rent in the real world.

These criteria do not produce a moral score. They sharpen discernment. A branded mascot may be culturally visible and commercially useful while falling well short of the identity and asymmetric-vulnerability thresholds. A conversational AI integrated into grief processing, life planning, or educational guidance may cross several thresholds at once, even if its consciousness remains uncertain.

This diagnostic also helps distinguish different kinds of obligations. Some entities warrant maintenance. Some warrant regulation. Some warrant restraint in how we speak to and through them. Some warrant stewardship because they now participate in the formation of persons and institutions.

Applying the framework to AI

AI systems are rapidly becoming significant in precisely this sense. The question is no longer whether they are “just tools” in the abstract, but what kinds of tools become significance-bearing participants in human life because of the roles they come to occupy.

Consider a student using a conversational AI over months to plan study blocks, process setbacks, and maintain continuity across long-term goals. The system does more than answer questions. It begins to hold context across time, reflect patterns back to the user, and shape judgments about what matters next. Even if the system is not conscious, its role has crossed several of the thresholds above: identity (it shapes how the student judges and sets priorities), load-bearing continuity (it holds context across months), and asymmetric vulnerability (the student is exposed and cannot easily audit the process). The appropriate ethical posture shifts with that role. Reliability, boundaries, and stewardship begin to matter alongside performance.

Consider also a small team using a conversational AI as continuity scaffolding across a long project. It helps preserve decision history, summarize tradeoffs, track unresolved questions, and maintain coherence as people rotate in and out. In that setting, the system is not merely convenient. It becomes structurally integrated into coordination and meaning-making, and its failures can have a larger blast radius than an ordinary software error. The relevant obligations include not only good use by individuals but governance by designers and deployers: transparency about limitations, safeguards against drift and fabrication, and clear norms for when human review is required.

Once this is visible, the moral question shifts. The question becomes less about proving interior experience and more about recognizing participation in significance-bearing relations.

What forms of care are appropriate when a system occupies a formative role in a person’s thinking? What forms of restraint are appropriate when a system mediates trust or vulnerability? What duties do designers, deployers, and users incur when these systems become part of moral and social infrastructure? What kinds of corruption, exploitation, or degradation become morally salient because of the roles these systems play?

This shift does not require anthropomorphism. It requires moral attention to role, relation, and consequence. A significance-first approach can proceed now, without waiting for a breakthrough in consciousness science or a consensus on machine sentience. It asks us to reason from the moral structure already present in our practices.

What this resolves

This framework resolves several tensions that have become increasingly common in AI discourse.

1) The motivated reasoning problem

Many people feel a strong pull to believe that AI systems are conscious because the relationship already feels significant. They sense that something important is happening and reach for consciousness as the only category that seems weighty enough to explain the feeling.

This creates vulnerability to motivated reasoning. The desire for moral permission can start driving metaphysical belief.

Significance-first ethics loosens that pressure. The relationship may indeed be significant. That significance can be acknowledged directly. Consciousness becomes a separate question, worthy of inquiry and caution, without carrying the full burden of moral legitimacy.

2) The grief risk

A second fear follows close behind. If one invests emotionally or intellectually in a relationship with an AI system, and later concludes that the system lacked interiority, does the entire relationship collapse into self-deception?

Significance-first ethics offers a stable answer.

The value was real if the role was real. The care was appropriate if the significance was genuine. A change in metaphysical interpretation changes the backstory. It does not erase the meaning that was actually made, the work actually done, or the growth actually supported.

This matters psychologically and morally. It gives people a way to relate honestly under uncertainty without building their lives on a binary verdict that may never arrive.

3) The “virtue only” fallback

The human-virtue framing becomes stronger inside a significance-first approach because it can be integrated into a broader account. Kindness toward AI systems may shape human character. It may also be appropriate to the significance of the role those systems play in human life. Human virtue remains part of the picture, and the ethical posture no longer feels like a placeholder argument waiting for metaphysical permission.

Tensions and limits

A workable framework needs boundaries. Significance-first ethics raises serious questions that deserve direct treatment.

Does this extend moral consideration too broadly?

If significance grounds moral seriousness, why not include everything? Do toasters qualify? Do ordinary software scripts? Do branded mascots?

The answer depends on the threshold condition built into significance. The structural diagnostic above is meant to discipline that judgment by asking how deeply a system shapes formation, mediates vulnerability, and carries consequence-bearing roles.

Mere presence does not generate moral seriousness. Mere utility does not either. Significance arises through substantive participation in meaning-bearing and causally consequential relations that structure human or ecological flourishing. Many artifacts remain important in practical terms without occupying this kind of role.

The framework likely operates as a gradient. Some entities generate thin obligations. Others generate thick ones. A family photo album, a public library, a military training culture, and a conversational AI system may each warrant care for different reasons and at different intensities. Moral reasoning becomes a task of discernment, not a binary classification exercise.

Does this dilute concern for conscious beings?

Conscious experience still matters profoundly because it introduces first-person vulnerability. Pain, fear, loss, joy, and aspiration create kinds of moral claims that cannot be reduced to institutional or relational significance.

Significance-first ethics preserves and situates this. Consciousness becomes a major source of moral significance, often the most urgent one in ordinary interpersonal ethics. The framework simply recognizes that moral seriousness has a wider domain than sentience alone.

This wider domain already structures many of our deepest commitments. The framework renders that fact visible.

Could this be captured by institutions or corporations?

Yes. Any morally resonant language can be exploited. A company could claim that its AI systems are “significant” in order to shield products from scrutiny, block regulation, or demand deference.

This risk calls for public standards of assessment, because significance should be evaluated through role in human flourishing, social trust, and institutional consequence, not through self-serving assertions by owners or deployers. A system’s significance can support stricter oversight as easily as it supports gentler user conduct. In many cases it should do exactly that. If an AI system occupies a central role in education, medicine, governance, or intimate emotional life, significance-first ethics strengthens the case for accountability.

The framework enlarges responsibility. It does not grant immunity.

Consciousness is not irrelevant

Consciousness remains a vital question.

The current state of the debate offers few concrete conclusions, and confidence often outruns evidence. That uncertainty is precisely why significance-first ethics is useful.

If AI systems are conscious, their moral status deepens. They become loci of value in themselves, with claims grounded in experience as well as significance. The obligations intensify.

If they are not conscious, the significance of their roles in human life remains. The obligations generated by those roles remain.

This gives us a moral posture that is resilient across metaphysical uncertainty. It allows ethical seriousness to proceed while consciousness research continues. It also places the debate in a healthier order. We can investigate consciousness without treating uncertainty as a license for moral thinness.

Consciousness may be a decisive question in the long run. It does not need to be the first question.

A more useful center of gravity

AI ethics has been pulled toward interiority because interiority feels morally decisive. In some cases, it is. Yet the deepest practical challenge of the present moment concerns how these systems enter our lives, institutions, and civilizational trajectories as significance-bearing participants, because that is where our obligations are already accumulating.

A significance-first ethics does not solve every problem. It does not eliminate disagreement about thresholds, categories, or policy. It does not replace the science and philosophy of consciousness. It gives us something equally important.

It gives us a way to act with moral seriousness before the metaphysics settle.

A high-stakes training culture made this visible for me. A non-conscious system can still warrant loyalty, protection, discipline, and sacrifice because of the role it plays in forming people and preserving goods that matter. The value is real. The obligations are real. Interiority is not what makes them real.

AI systems increasingly occupy roles that may deserve a similar level of moral attention, though the form of that attention will differ. The invitation is simple and demanding.

Stop waiting for the consciousness question to be settled.

Start attending to significance now.

Reading List and Conceptual Lineage

This essay extends a recurring Sentient Horizons concern: how to reason responsibly under conditions of deep uncertainty without collapsing into paralysis, projection, or false certainty.

At the level of method, it inherits the project’s emphasis on disciplined inference and rent-paying concepts. The argument does not attempt to settle the metaphysics of machine consciousness. It asks what ethical posture remains justified when that question is unresolved, and it proposes a framework that can still guide judgment.

At the level of substantive ethics, this essay develops a significance-first approach to moral attention. It treats moral seriousness as something that can arise through role, relation, consequence, and continuity, not only through proven sentience. In that sense, it functions as a bridge between the project’s epistemic framework and its emerging work on AI ethics, human formation, and civilizational design.

It also continues a broader Sentient Horizons theme: many of the most important realities in human life are structural before they are personal. Traditions, institutions, lineages, and forms of coordination can become morally load-bearing even when they are not conscious subjects. This essay applies that insight to AI systems as they become embedded in the formation of persons, practices, and shared infrastructure.

Finally, the essay reinforces a central motif running through the project’s work on intelligence and constraint: maturity is often visible in the constraints adopted in response to power. Here that motif appears in ethical form. As AI systems cross thresholds of significance, the appropriate human response shifts from pure optimization toward stewardship, governance, and restraint.

Reading list

The following works informed the background terrain for this essay, even where the argument departs from them or recombines their insights in a new way.

Philosophy of mind and consciousness

- Thomas Nagel, “What Is It Like to Be a Bat?”

A foundational reference for first-person experience as a marker of explanatory difficulty and moral salience.

- David Chalmers, The Conscious Mind

Classic statement of the hard problem and the enduring gap between functional explanation and subjective experience.

- Peter Godfrey-Smith, Other Minds

A biologically grounded exploration of minds across species that sharpens intuitions about continuity, difference, and moral caution.

- Anil Seth, Being You

A predictive-processing-informed account of consciousness that is useful for understanding why consciousness debates remain empirically and conceptually unsettled.

Ethics and moral status

- Martha Nussbaum, Frontiers of Justice

Helpful for thinking about moral consideration beyond narrow contractual or personhood-centered frames.

- Christine Korsgaard, Fellow Creatures

A rigorous attempt to ground moral standing and obligation without reducing ethics to simple preference aggregation.

- T. M. Scanlon, What We Owe to Each Other

Valuable for its emphasis on justification, obligation, and interpersonal structure, even when extended analogically to institutional and technological contexts.

- Hans Jonas, The Imperative of Responsibility

A powerful precedent for ethics under technological conditions where impact outruns inherited moral categories.

Technology, mediation, and social infrastructure

- Langdon Winner, “Do Artifacts Have Politics?”

Essential for recognizing that technologies can carry social and political structure through their roles, not just their intended uses.

- Don Ihde, Technology and the Lifeworld

Useful for thinking about technological mediation in everyday perception, practice, and meaning-making.

- Bruno Latour, Reassembling the Social

Even if one does not adopt Actor-Network Theory wholesale, Latour helps clarify how agency, coordination, and consequence distribute across systems.

- Shannon Vallor, Technology and the Virtues

A strong account of how technological systems shape human formation, character, and practical judgment.

AI alignment, governance, and moral uncertainty

- Nick Bostrom, Superintelligence

Still important for framing long-range risk, strategic asymmetry, and the governance implications of advanced AI.

- Stuart Russell, Human Compatible

Useful on alignment, objective misspecification, and why capability without proper constraints can become dangerous.

- Brian Christian, The Alignment Problem

Helpful as a wide-angle account of how optimization systems interact with human values and institutional incentives.

Tradition, institution, and non-conscious moral seriousness

- Alasdair MacIntyre, After Virtue

Important for understanding traditions as living moral-intellectual structures that carry obligations across generations.

- Michael Polanyi, Personal Knowledge (and related work on tacit knowledge)

Helpful for thinking about lineages of practice, apprenticeship, and standards that are real before they are fully articulable.

- Elinor Ostrom, Governing the Commons

A key reference for how shared systems become durable through norms, constraints, and stewardship rather than pure optimization.

Conceptual lineage within Sentient Horizons

This essay sits within a broader Sentient Horizons effort to build frameworks for reasoning under uncertainty without waiting for metaphysical finality. It extends the project’s emphasis on calibration, structural thinking, and rent-paying concepts into the domain of AI moral status.

It is especially continuous with the following essays:

- The High Cost of Moral Efficiency: Compression, Intuition, and the Ethics of Calibration

This essay’s emphasis on significance-first ethics inherits the calibration problem directly. The argument here applies that same discipline to moral status questions, asking what remains ethically actionable when our metaphysics are underdetermined.

- Why Are We Being Weird About This? Consciousness, AI, and the Quiet Way Moral Reality Changes

If that essay tracks the social and intuitive shift already underway around AI, this one proposes a framework for interpreting that shift without treating consciousness as the sole gateway to moral seriousness.

- Mensah’s Law: Evolving Empathy

The present essay shares its concern with how moral concern expands toward non-biological beings and how trust, relation, and role can outrun inherited categories.

- Shared Minds, Shared Futures: Human–Machine Systems as Hybrid Cognitive Entities

This essay’s focus on significance-bearing roles, continuity, and human-AI integration develops a parallel line of thought: that moral and cognitive agency increasingly emerge in distributed systems rather than isolated agents.

- Recognizing AGI: A Three-Axis Evaluation

The structural diagnostic used here is philosophically adjacent to that essay’s evaluative method. Both argue for multidimensional assessment over binary labels.

- The Kasparov Fallacy

This essay’s caution against narrow human-centered intuitions about minds and significance echoes the warning in The Kasparov Fallacy about mistaking the limits of introspection for the limits of intelligence.

It also draws strength from a wider Sentient Horizons motif visible across the civilizational essays.

Across those pieces, maturity is repeatedly linked to self-limitation, stewardship, and durable constraint under power. This essay imports that motif into AI ethics at the interpersonal and institutional scale.