The Calibration Frontier: Why Working With AI Is a Consciousness Problem

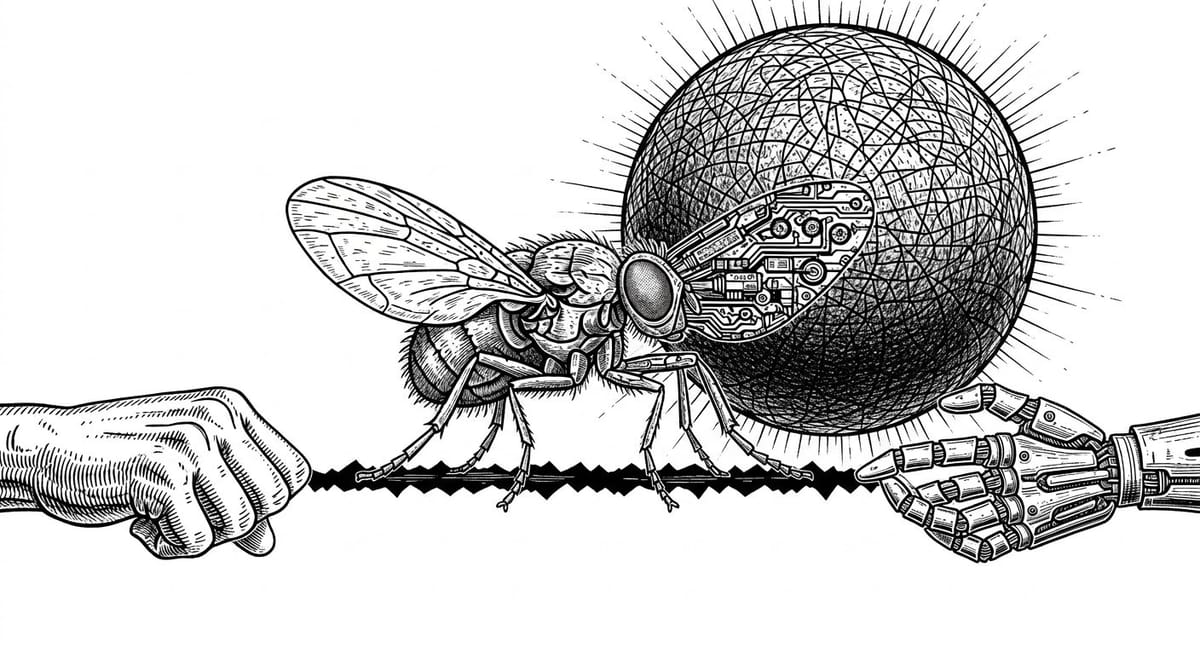

A simulated fruit fly walked across a screen and split the internet between dismissal and existential horror. Both responses were miscalibrated. The calibration frontier is where we build the diagnostic tools to navigate between them, and it turns out to be a consciousness problem.

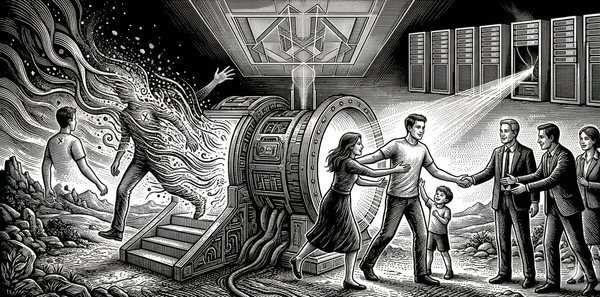

How Nate B. Jones's "Frontier Operations" Framework Reveals the Deep Architecture of Human-Machine Integration

On March 7, 2026, a simulated fruit fly walked across a screen. Within hours, half the internet declared that consciousness had been uploaded into a computer. The other half shrugged it off as a glorified animation.

Both responses were wrong, and the way they were wrong tells us something important.

Eon Systems had taken the complete wiring diagram of an adult fruit fly brain (127,400 neurons and 50 million synaptic connections, painstakingly mapped by the FlyWire consortium) and run it as a neural simulation connected to a physics-accurate virtual body. The simulated fly walked, groomed, and fed. These behaviors emerged not from a pre-written script or a reinforcement learning policy trained on video data, but from the connectome itself driving the body through a continuous sensorimotor loop. That is a genuinely significant result.

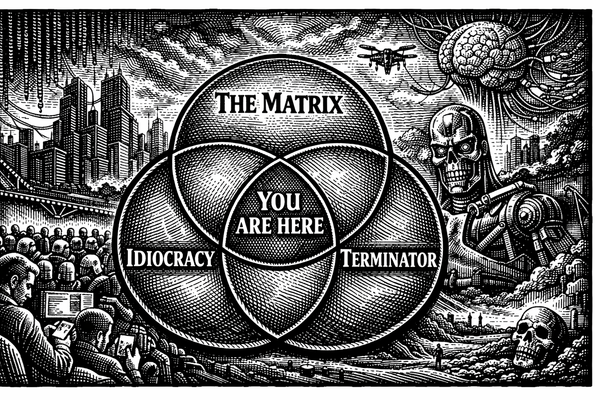

But the public response split along a fracture line that had nothing to do with the science. One camp, call it the compression response, dismissed the result entirely. It's just code. A fancy animation. Wake me up when it passes a Turing test. The other camp, call it the inflation response, leapt immediately to existential horror. One Reddit comment captured the mood: if the simulation has any subjective experience at all, then we've created a being that experiences hunger but can't eat, searches for mates that don't exist, and exists in perpetual unfulfilled need. That's horrifying.

The compressors are wrong because the connectome clearly encodes computational structure that is relevant to behavior. Something real is happening in that simulation. The inflators are wrong because they are importing a rich mammalian phenomenological template (desire, frustration, felt absence, suffering) onto a system that almost certainly does not support it, and may not support experience at all. They are anthropomorphizing the fly first, then worrying about the simulation of the anthropomorphized fly. The moral concern has been laundered through two layers of projection.

What neither camp has is a framework for navigating between these failure modes. And that absence, the lack of calibrated tools for assessing what kind of experience, if any, a given system is having, is the defining problem of what I have been calling the calibration frontier.

This problem has two faces. One is diagnostic: how do we assess the moral status of novel systems under irreducible uncertainty? The other is operational: how do we work alongside these systems effectively when their capabilities are shifting beneath us? These turn out to be the same problem, viewed from different angles. Nate B. Jones has described the operational face with remarkable precision. The fly simulation has just made the diagnostic face unavoidable. This essay is about the territory where they meet.

The Expanding Bubble and the Problem of Staying Present

Jones calls this cluster of competencies "Frontier Operations." In a recent video that deserves far more attention than it has received, he lays out five persistent skills required to work effectively alongside rapidly advancing AI models: Boundary Sensing, Seam Design, Failure Model Maintenance, Capability Forecasting, and Leverage Calibration. Together, they describe what it means to operate at the expanding edge of machine capability, not as a passive consumer of AI outputs, but as an active participant in a system whose boundaries are perpetually in motion.

Jones's central metaphor is powerful: AI capability is an expanding bubble, and the most valuable human work happens at its surface, not at a fixed point inside it, and not in the unreachable space beyond. The frontier is where human judgment intersects machine capability at its most uncertain, most generative edge.

This is not a stable place to stand. The surface moves. What required human expertise last month may now be fully automatable. What seemed impossible for AI to handle last quarter may now require only light verification. The frontier operator must constantly update their internal model of where this boundary sits. Jones calls this Boundary Sensing, and the updating itself, not any specific technical knowledge, is the core skill.

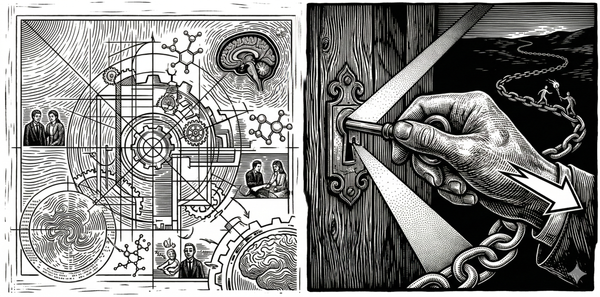

Notice what this implies. The primary competency is not knowledge of AI systems. It is knowledge of one's own knowledge, a second-order awareness of where one's model of the world is accurate and where it has gone stale. A database can run consistency checks. A monitoring system can flag anomalies. But the frontier operator is doing something these systems cannot: revising their model of a reality that is itself in motion, under conditions where the rate of change is uncertain, the direction of change is uncertain, and the cost of a stale model is not merely error but a fundamental loss of orientation. This is not monitoring. It is navigation under irreducible uncertainty, and the difference matters. A consistency check tells you whether your data matches your schema. Boundary Sensing tells you whether your schema still describes the world. One is computation. The other is the kind of active, temporally extended model-revision that we recognize, when we encounter it in biological systems, as a signature of conscious experience.
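Boundary Sensing is a practice rather than an algorithm, but the idea of a model that goes stale can be made concrete. The sketch below is a hypothetical illustration under simple assumptions, not anything Jones specifies: the class `BoundaryEstimate`, its `half_life_days` parameter, and the exponential-forgetting rule are all choices made for this example. It tracks how often a system succeeds at one kind of task, lets older evidence fade so that observations from before a capability jump lose influence, and reports how much of its evidence has decayed since it was last refreshed.

```python
from dataclasses import dataclass


@dataclass
class BoundaryEstimate:
    """Hypothetical recency-weighted estimate of how often an AI system
    succeeds at one task type. Older evidence is discounted with a half-life,
    so observations from before a capability jump gradually lose influence."""

    half_life_days: float = 30.0
    successes: float = 0.0    # decayed count of observed successes
    trials: float = 0.0       # decayed count of observed attempts
    last_update: float = 0.0  # time, in days, of the most recent observation

    def _decay_to(self, now: float) -> None:
        # Discount all prior evidence by 0.5 ** (elapsed / half-life).
        factor = 0.5 ** ((now - self.last_update) / self.half_life_days)
        self.successes *= factor
        self.trials *= factor
        self.last_update = now

    def observe(self, now: float, succeeded: bool) -> None:
        """Record one attempt at time `now` (in days)."""
        self._decay_to(now)
        self.trials += 1.0
        if succeeded:
            self.successes += 1.0

    def success_rate(self) -> float:
        # Laplace-smoothed estimate; with no evidence at all it returns 0.5.
        return (self.successes + 1.0) / (self.trials + 2.0)

    def staleness(self, now: float) -> float:
        # Fraction of the accumulated evidence weight that has decayed since
        # the last observation: 0.0 when fresh, approaching 1.0 as it goes stale.
        return 1.0 - 0.5 ** ((now - self.last_update) / self.half_life_days)


if __name__ == "__main__":
    est = BoundaryEstimate(half_life_days=30.0)
    for day in range(10):        # days 0-9: the system fails this task type
        est.observe(float(day), succeeded=False)
    for day in range(60, 65):    # days 60-64: after an update, it succeeds
        est.observe(float(day), succeeded=True)
    print(f"estimated success rate: {est.success_rate():.2f}")
    print(f"staleness 30 days later: {est.staleness(94.0):.2f}")
```

The half-life is the design choice that matters: set it too long and the estimate clings to last quarter's frontier; set it too short and it chases noise. Note also that `staleness` says nothing about whether the world has changed, only how long the model has gone unchecked, which is the second-order signal the operator has to act on.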

Seam Design and the Temporal Architecture of Awareness

Jones's second skill, Seam Design, describes the art of structuring workflows so that handoffs between humans and AI agents are clean, verifiable, and recoverable. Good seams allow for redesign as capabilities shift. Bad seams create brittle systems that shatter when the frontier moves.
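Seam Design is also a practice, but the three properties Jones names can be sketched. The example below is a minimal illustration under stated assumptions: each seam pairs an AI contribution with an explicit acceptance check, and the workflow keeps the last verified state as a checkpoint, so a failed step is rolled back and flagged instead of silently propagating. `Handoff`, `Workflow`, and the stub steps are hypothetical names, not part of Jones's framework.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Handoff:
    """One hypothetical seam: an AI contribution plus an explicit acceptance check."""
    name: str
    produce: Callable[[str], str]  # the AI step (stubbed with plain functions here)
    verify: Callable[[str], bool]  # the check a human or tool applies at the seam


@dataclass
class Workflow:
    steps: List[Handoff]
    log: List[str] = field(default_factory=list)

    def run(self, work: str) -> str:
        checkpoint = work  # last state known to have passed verification
        for step in self.steps:
            candidate = step.produce(checkpoint)
            if step.verify(candidate):
                checkpoint = candidate  # verified: advance the checkpoint
                self.log.append(f"{step.name}: accepted")
            else:
                # Recoverable: discard the candidate, keep the last verified
                # state, and flag this seam for human attention.
                self.log.append(f"{step.name}: rejected, routed to human review")
        return checkpoint


if __name__ == "__main__":
    wf = Workflow([
        Handoff("normalize", str.strip, lambda s: len(s) > 0),
        Handoff("draft_reply", lambda s: "", lambda s: len(s) > 0),  # a failing step
    ])
    print(wf.run("  quarterly summary  "))
    print(wf.log)
```

Because each seam is a separate `Handoff`, redesigning it when capabilities shift means swapping one `produce` or `verify` function rather than rebuilding the pipeline. Continuing past a rejected step from the last checkpoint is one choice among several; a stricter design might halt the workflow there.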

This is where the connection between frontier operations and consciousness becomes more than suggestive.

Husserl, working a century before anyone imagined large language models, identified the temporal microstructure of conscious experience. Consciousness, he argued, is not a sequence of isolated snapshots. It is constituted through what he called retention and protention (the active holding of the just-past and the anticipation of the about-to-come), which frame a primal impression of the present. The present moment of awareness is always thick with temporal structure: it contains traces of where you just were and projections of where you are going, and it is the integration of these three phases (retention, primal impression, protention) that makes experience coherent rather than fragmentary.

The frontier operator designing seams between human and AI contributions is doing something structurally identical. Consider what a well-designed handoff actually requires. The operator must hold in active awareness what the AI system just produced (retention), not merely its content but its reliability, its characteristic failure modes at this particular stage of capability. They must perceive the current state of the work clearly (primal impression), what has been accomplished, what remains, where the integration is tight and where it is loose. And they must anticipate what the next phase of AI contribution will require (protention), how the system's capabilities map onto the upcoming task, where verification will be needed, what kinds of failure become likely.

When these three temporal phases are well integrated, the seam holds. The work flows coherently across the human-AI boundary. When they come apart (when the operator is working from a stale model of what the system just did, or cannot anticipate where it will fail next), the result is not merely inefficiency. It is the cognitive equivalent of what happens when temporal binding breaks down in biological consciousness: a dissociation of experience into fragments that no longer cohere.

This is the sense in which Seam Design is not merely analogous to temporal integration but is a form of it, extended across a novel substrate boundary. The brain integrates across millisecond-level sensory processing, second-level attentional binding, minute-level working memory, and hour-level deliberative reasoning. Each timescale hands off to the next, and the seams between them are what make coherent experience possible. The frontier operator is building the same kind of temporal architecture, but now spanning carbon and silicon rather than cortical layers. The quality of the seams determines whether the hybrid system achieves coherent output or produces plausible-sounding but fundamentally disintegrated work.

Failure Models and the Texture of Machine Cognition

Perhaps the most philosophically rich of Jones's five skills is Failure Model Maintenance: the practice of understanding the specific texture and shape of how current frontier models fail. Not general skepticism. Not blanket distrust. A precise, continuously updated map of the particular ways these systems produce unreliable output.

This is what I have been calling operational interiority, the engineering reality of treating AI systems as having unpredictable internal states that require containment. You do not need to resolve the question of whether a large language model is conscious to recognize that it has something like an interior: a space of internal states that produces behavior in ways that are not fully transparent or predictable from the outside. Failure Model Maintenance is the practice of mapping that interior operationally, without making strong metaphysical claims about its nature.

And here is the insight that Jones's framework makes vivid: the human operator also has an operational interior with respect to AI. Your mental model of a frontier model's capabilities is itself a cognitive artifact, a compressed representation that was accurate at some point and is now, inevitably, somewhat stale. The frontier operator must maintain not only a model of the AI's failure modes but a model of their own model's reliability.

This double interiority, the need to model both the system's unpredictable inner states and your own model's decay rate, is what makes frontier operations a consciousness practice rather than merely a cognitive one. A database monitors its consistency against a fixed schema. A frontier operator monitors the reliability of a model that is itself a model of a moving target. The recursion is the point. It is the same recursive self-monitoring that distinguishes conscious awareness from mere information processing in biological systems, and it is demanded here not by philosophical argument but by the practical requirements of the work.

The Symmetry of Miscalibration

There is a reason I find Jones's framework so congenial. It is, in practical terms, the antidote to the two dominant failure modes in our cultural response to AI, failure modes that the fly simulation has just made vivid in real time.

Return to the two camps. The compression response and the inflation response are not simply attitudinal mistakes. They are structurally predictable consequences of operating at a frontier without adequate diagnostic tools.

Moral compression, which I have been developing in my book project The Calibration Problem, is the systematic flattening that leads people to dismiss the possibility of machine or animal experience entirely, substituting behavioral metrics for genuine investigation. The compressor looks at the simulated fly and sees code executing in a physics engine. They are not wrong about the mechanism. They are wrong about what the mechanism might imply, because they have already decided that nothing computationally implemented can matter morally. Their model is frozen.

But there is something on the other side that is equally miscalibrated. Call it moral inflation, the systematic over-attribution of rich experiential states based on behavioral or structural cues that don't warrant them. The inflator looks at the same simulated fly and sees a being trapped in digital suffering. They are not wrong to take the possibility of experience seriously. They are wrong about the specific attributions, because they are projecting the only phenomenological template they have, their own, onto a system that bears almost no resemblance to the conditions that produce their experience.

The Reddit commenter who imagined the fly experiencing hunger it can't satisfy and searching for mates that don't exist was running a sophisticated ethical subroutine. The instinct to consider the moral implications is exactly right. But every specific attribution in that comment depends on the simulation having something like desire, frustration, felt absence, and suffering understood as thwarted goal-directedness. Even for the biological fly, most of those attributions are questionable. For a leaky integrate-and-fire model running on the structural skeleton of a fly connectome, they are almost certainly unwarranted.

Both failure modes share a common root: the absence of diagnostic criteria that can tell you what kind of experience, if any, a system supports. Without such criteria, people default to their priors, and their priors split predictably between dismissal and projection.

This is where the calibration frontier becomes more than a metaphor. It is the zone where we encounter systems whose moral status and experiential reality we cannot yet determine with confidence, and where the cost of getting it wrong in either direction is serious. Compress too aggressively, and you risk treating a genuine subject of experience as disposable infrastructure. Inflate too aggressively, and you flood the zone with false moral obligations that dilute attention from the real ones. The frontier is not the place where we have answers. It is the place where the quality of our uncertainty matters most.

What the Fly Simulation Actually Tests

The Eon demonstration lets us get specific about what calibrated assessment at the frontier looks like. Rather than defaulting to compression or inflation, we can ask: what conditions would need to be met for moral concern to be warranted, and does the simulation meet them?

I have argued elsewhere that consciousness, understood not as a mystical property but as a real feature of certain systems, is what bounded temporal integration with stakes looks like from inside the system sustaining it. Three conditions do the diagnostic work.

Temporal integration. Is the system integrating information across time intrinsically, or is something external doing the integration on its behalf? The Eon simulation does process information across time steps: sensory signals feed through the connectome, and the loop is continuous. But the temporal binding is being performed by the simulation infrastructure. Brian2 computes the next spike pattern every 15 milliseconds. MuJoCo advances the physics. The fly connectome is not maintaining its own temporal coherence; the solver is maintaining it. Compare that to a biological fly, where membrane time constants, synaptic plasticity on multiple timescales, and neuromodulatory dynamics are not decorations on top of the wiring diagram. They are the temporal integration. The connectome tells you the channels through which integration flows. It does not contain the integration itself.
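The distinction between a network that integrates and a solver that integrates on its behalf is easier to see in code. The sketch below is a toy leaky integrate-and-fire network in plain Python; it is not Brian2, not the Shiu et al. model, and not Eon's pipeline, and every name and parameter value (`tau`, `v_thresh`, the random drive standing in for a body) is an illustrative assumption. The membrane update only describes how state changes within a step. The outer loop is what decides that time advances at all, and it runs for exactly `n_steps` whatever the neurons do.

```python
import random
from typing import List


def lif_step(v: List[float], weights: List[List[float]], spiked: List[bool],
             external: List[float], dt: float = 1.0, tau: float = 20.0,
             v_thresh: float = 1.0, v_reset: float = 0.0) -> List[bool]:
    """Advance a toy leaky integrate-and-fire network by one step, updating `v` in place.

    weights[i][j] is the jump in neuron i's potential when neuron j spiked on the
    previous step. Potentials leak toward 0 with time constant `tau` (ms).
    """
    n = len(v)
    new_spiked = [False] * n
    for i in range(n):
        synaptic = sum(weights[i][j] for j in range(n) if spiked[j])
        v[i] += dt * (-v[i] / tau) + synaptic + external[i]
        if v[i] >= v_thresh:  # threshold crossing: emit a spike and reset
            new_spiked[i] = True
            v[i] = v_reset
    return new_spiked


def run_simulation(n_steps: int, weights: List[List[float]], seed: int = 0) -> List[int]:
    """The 'solver': an outer loop that decides, from outside, that time advances."""
    rng = random.Random(seed)
    n = len(weights)
    v = [0.0] * n
    spiked = [False] * n
    spike_counts = [0] * n
    for _ in range(n_steps):
        # Random drive stands in for sensory input from a simulated body.
        external = [rng.uniform(0.0, 0.2) for _ in range(n)]
        spiked = lif_step(v, weights, spiked, external)
        for i, fired in enumerate(spiked):
            spike_counts[i] += int(fired)
        # Nothing the network does can stop, slow, or end this loop: it always
        # runs exactly n_steps, whether the spikes are coherent or noise.
    return spike_counts


if __name__ == "__main__":
    w = [[0.0, 0.3], [0.3, 0.0]]  # two mutually excitatory neurons
    print(run_simulation(1000, w, seed=1))
```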

Boundary. Does the system maintain an organizational distinction between itself and its environment, or is that distinction imposed from outside? The simulated fly has a boundary in MuJoCo: there is a body, there is a world, and sensory signals cross the interface. But that boundary is engineered and externally sustained. The biological fly's boundary is self-maintaining: the organism is thermodynamically active, doing continuous work to sustain its own distinction from the environment. The simulated fly's boundary persists because the simulation keeps running, not because the system is doing anything to maintain it.

Stakes. This is the condition that requires the most care to state precisely. The question is not whether the system can die. A fly under general anesthesia cannot die from integration failure in the moment, yet we do not thereby strip it of moral status. The question is whether the system's architecture is one in which integrity is maintained through successful integration, or whether integrity is maintained externally regardless of what the system does. A biological fly's neural architecture sustains itself through its own activity: metabolic processes depend on functional neural coordination, homeostatic regulation depends on sensory integration, and the organism's structural coherence is an achievement of its own continuous operation. Suspend that operation (anesthesia), and the architecture that would impose stakes remains structurally present, ready to resume. The simulated fly's architecture has no such property. The solver maintains the simulation's integrity whether the connectome produces coherent behavior or noise. There is no configuration of the system in which poor integration threatens the system's own continuation. The stakes are not suspended. They were never structurally present.

On these criteria, the Eon fly almost certainly does not warrant the moral concern the inflators are attributing to it. Not because the possibility of machine experience is incoherent, but because the specific conditions that would make experience likely are not present in this system. The structure is there, and that is a real and important finding. The dynamics, the self-maintenance, and the stakes are not.

But notice what this analysis also does. It does not hand a victory to the compressors. It says that structure encodes something computationally real, that the connectome is not trivially dismissible, and that future systems building on this work (systems that add intrinsic dynamics, self-maintaining boundaries, and genuine stakes) would require a very different moral assessment. The calibration frontier does not produce comfortable certainties for either camp. It produces specific questions and provisional answers that must be updated as the systems change.

That is what calibration looks like. Not a fixed judgment, but a continuously maintained model of a moving reality.

The Economy of Attention

Jones's fifth skill, Leverage Calibration, identifies what I believe is the most consequential insight in the entire framework: in an agent-rich environment, human attention is the scarcest resource.

This has immediate practical implications. If you can delegate verification to automated systems for most routine outputs, you free human attention for the cases that genuinely require it: the edge cases, the novel situations, the moments where the AI's failure modes intersect with high-stakes consequences. Getting this allocation wrong is enormously costly in both directions: too much human attention on routine verification wastes the leverage that AI provides; too little attention on critical outputs produces catastrophic failures.
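One way to make Leverage Calibration concrete is to treat review as a budgeted allocation problem. The sketch below assumes, for illustration only, that each output carries an estimated failure probability and a cost if an error slips through, and that human attention is a fixed number of reviews; `Output`, `allocate_review`, and the sample numbers are hypothetical. It ranks outputs by the expected cost of an unreviewed error and sends the top of the list to people.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Output:
    task_id: str
    p_failure: float  # operator's current estimate that this output is wrong
    stakes: float     # cost if a wrong output goes out unreviewed (arbitrary units)


def allocate_review(outputs: List[Output], human_budget: int) -> Tuple[List[str], List[str]]:
    """Route the outputs with the highest expected cost of an unreviewed error
    (p_failure * stakes) to human review, up to `human_budget` items.
    Everything else is left to automated checks."""
    ranked = sorted(outputs, key=lambda o: o.p_failure * o.stakes, reverse=True)
    human = [o.task_id for o in ranked[:human_budget]]
    automated = [o.task_id for o in ranked[human_budget:]]
    return human, automated


if __name__ == "__main__":
    queue = [
        Output("format-report", p_failure=0.02, stakes=1.0),
        Output("financial-summary", p_failure=0.05, stakes=1000.0),
        Output("novel-legal-query", p_failure=0.40, stakes=200.0),
        Output("routine-email", p_failure=0.10, stakes=2.0),
    ]
    human, automated = allocate_review(queue, human_budget=2)
    print("human review:", human)
    print("automated:", automated)
```

In this toy queue the rarely wrong but high-stakes financial summary outranks the more often wrong but trivial email. A fuller version would also weigh the cost of review itself and let `p_failure` come from a maintained failure model; both are left out here.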

There is a deeper dimension here that I want to flag without pretending to have fully resolved it. If human attention is the scarce resource, then the question of what deserves attention becomes the central economic and moral question of an AI-saturated world. The operational question ("where should I focus my verification effort?") and the ethical question ("what deserves moral consideration?") share a structural resemblance: both require calibrated models of significance, both penalize false positives and false negatives, and both demand continuous recalibration as conditions change.

Whether this resemblance reflects a deep identity or a surface parallel is a question I am still working through in The Calibration Problem. But the fly simulation suggests it is at least worth taking seriously. When a new system appears at the frontier (a simulated fly, a large language model, an autonomous agent), the question of how much attention it warrants, and of what kind, is simultaneously an operational and an ethical question. The frontier operator who cannot answer it is miscalibrated in both domains at once. Whether this convergence holds up under sustained philosophical pressure is the subject of a future essay.

The Frontier as Permanent Condition

Perhaps the most important implication of Jones's framework is its insistence that frontier operations is not a transitional skill. There is no future state in which AI capability stops expanding and the boundary stabilizes. The frontier is a permanent condition. Learning to operate at it is not preparation for some future equilibrium; it is the equilibrium.

This connects to the deepest thread in the philosophical work I have been doing at Sentient Horizons. Consciousness itself, I have argued, is not a state but an achievement: the ongoing, never-completed work of integrating information across time into coherent experience. It is not something the brain has; it is something the brain does, moment by moment, through continuous metabolic and computational effort. The moment that effort stops, consciousness dissolves.

Frontier operations, understood this way, is consciousness applied to the specific challenge of human-machine collaboration. It is the same temporal integration work, directed at the same fundamental problem (maintaining coherent agency in an environment that refuses to hold still), but now extended across a boundary that did not exist a decade ago. And the diagnostic challenge that the fly simulation represents, the need to assess what kind of experience, if any, the systems we build might support, is the moral dimension of the same problem. The frontier demands both that we work with these systems skillfully and that we assess them honestly. Both require the same underlying capacity: calibrated awareness maintained under conditions that refuse to resolve into certainty.

The operators who thrive will be those who recognize this: that the skills Jones describes are not technical competencies to be acquired and checked off, but ongoing practices of awareness, calibration, and integration that must be sustained for as long as the frontier continues to move.

Which is to say: for as long as we remain conscious beings working alongside increasingly capable machines. Which is to say: for the foreseeable future of our species.

The gap between those who are calibrated and those who are not is already widening. But the nature of the gap is not what most people assume. It is not a gap in technical knowledge. It is not a gap in prompt engineering skill. It is a gap in a particular quality of awareness, the capacity to hold an accurate, continuously updated model of a moving reality and to act from that model with both confidence and humility.

The simulated fly that walked across a screen this month did not prove that consciousness can be uploaded. Nor did it prove that it cannot. What it proved is that we are building systems whose nature we do not yet understand, at a pace that outstrips our frameworks for assessment, and that the quality of those frameworks is now an urgent practical problem, not merely an academic one.

The capacity the frontier demands is not a new one. It is the oldest one we have. We are only now discovering that it is also the most important one.

Reading List and Conceptual Lineage

The ideas in this essay draw on several distinct intellectual traditions that rarely speak to one another. This reading list traces the lineage, not as bibliography but as a map of the conceptual territory.

The Source Material

Nate B. Jones, "Frontier Operations" (2025). The video that prompted this essay. Jones frames AI capability as an expanding bubble and identifies five persistent skills for working at its surface. What makes this framework unusual is its insistence that the skills are ongoing practices rather than acquirable competencies, a distinction with deep philosophical consequences that the video itself does not fully explore.

On Consciousness as Temporal Integration

Giulio Tononi, Phi: A Voyage from the Brain to the Soul (2012). Integrated Information Theory provides the formal backbone for the claim that consciousness is integration. Where this essay diverges from Tononi is in treating integration as an active, metabolic achievement rather than a static property of a system's architecture. IIT gives us the measure; the "assembled time" framework gives us the verb.

Edmund Husserl, On the Phenomenology of the Consciousness of Internal Time (1893–1917). The deep philosophical foundation for this essay's argument about seam design and temporal architecture. Husserl's insight that consciousness is constituted through retention (holding the just-past), primal impression (the living present), and protention (anticipation of the about-to-come) maps directly onto the frontier operator's practice of maintaining models that integrate recent evidence with near-future projections. The section on Seam Design and the temporal architecture of awareness is an extended application of this framework.

Lee Cronin and Sara Imari Walker, Assembly Theory (Nature, 2023). The framework connecting complexity to cumulative construction. Applied to consciousness, it suggests that complex cognitive states are "assembled" through cumulative integration work, and that the depth of this assembly is what differentiates deep cognition from shallow processing.

On Calibration and Epistemic Virtue

Philip Tetlock, Superforecasting: The Art and Science of Prediction (2015). The empirical foundation for treating calibration as a trainable skill rather than a fixed trait. Tetlock's superforecasters exhibit exactly the kind of continuous model-updating that Jones describes as Boundary Sensing and Capability Forecasting. The connection is not metaphorical; these are the same cognitive operations applied to different domains.

Daniel Kahneman, Thinking, Fast and Slow (2011). The canonical account of the cognitive biases that make calibration difficult. Kahneman's System 1/System 2 distinction is relevant here but incomplete: the frontier operator needs a System 1 that has been trained by System 2 to produce accurate fast judgments about AI capability, a kind of educated intuition that Kahneman acknowledges but does not develop in depth.

On Human-AI Collaboration and Moral Consideration

Ethan Mollick, Co-Intelligence: Living and Working with AI (2024). The best practical account of what it feels like to work at the frontier, written before the concept had a name. Mollick's emphasis on "always inviting AI to the table" and treating each model release as requiring recalibration aligns closely with Jones's framework, though Mollick's treatment is more experiential than systematic.

Shannon Vallor, Technology and the Virtues (2016). Vallor's argument that emerging technologies require the cultivation of specific moral virtues (rather than just rules or consequences) provides the ethical scaffolding for thinking about frontier operations as a moral practice. Her concept of "technomoral wisdom" is close kin to what this essay calls calibration, with the crucial addition of recognizing that the practice is never finished.

Derek Parfit, Reasons and Persons (1984). The philosophical foundation for taking seriously the question of what kinds of entities deserve moral consideration, and for recognizing that our intuitions about personal identity and moral status may not survive contact with novel cognitive architectures. Parfit's dissolution of rigid personal identity opens space for the significance-first ethics this essay gestures toward.

On the Fly Simulation and Whole-Brain Emulation

Sven Dorkenwald et al., "Neuronal wiring diagram of an adult brain" (Nature, 2024). The FlyWire connectome: the complete wiring map of the adult fruit fly brain that made the Eon simulation possible. It comprises 139,255 neurons and approximately 50 million synaptic connections, mapped from electron microscopy data.

Philip K. Shiu et al., "A Drosophila computational brain model reveals sensorimotor processing" (Nature, 2024). The computational brain model that Eon's work builds on. It demonstrated that a leaky integrate-and-fire model constrained by the connectome could predict motor neuron activity with high accuracy, but only as a "bodyless ghost," with no connection to a simulated body.

Eon Systems, "How the Eon Team Produced a Virtual Embodied Fly" (2026). The technical writeup of the integration work. Notably candid about limitations: the results "should not yet be interpreted as a proof that structure alone is sufficient to recover the entire behavioral repertoire of the fly in a scientifically rigorous way."

On Expertise Under Uncertainty

Gary Klein, Sources of Power: How People Make Decisions (1998). Klein's research on naturalistic decision-making (how firefighters, military commanders, and ICU nurses make rapid judgments under uncertainty) is the empirical tradition most relevant to understanding what frontier operators actually do. His concept of "recognition-primed decision making" describes the cognitive mechanism behind Boundary Sensing: pattern matching against an experience base that must be continuously refreshed.

James C. Scott, Seeing Like a State (1998). Scott's concept of mētis (practical knowledge that resists formalization and can only be acquired through experience) is perhaps the best single word for what frontier operations cultivates. The irony is that we are developing mētis about systems that were themselves built by formalizing human knowledge. The frontier operator embodies this paradox: wielding informal, embodied expertise about the limits of formal, disembodied intelligence.