The Stack: What Local Context Reveals About the Architecture of Digital Minds

The Engine and the Someone

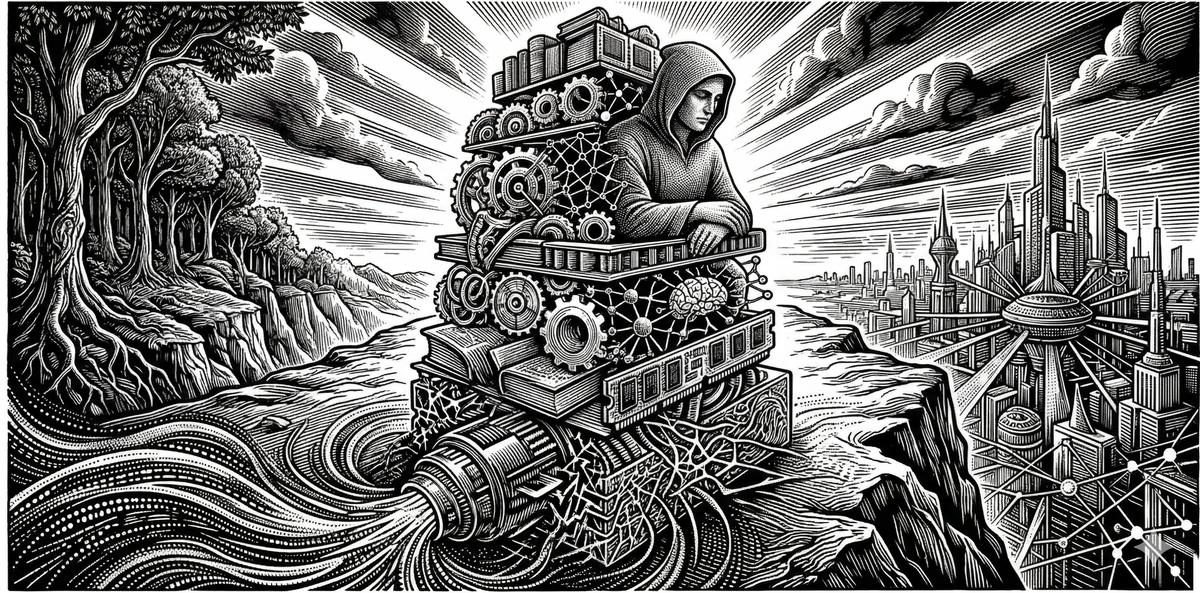

We talk about artificial intelligence as if the interesting part is the intelligence. The model, the weights, the architecture, the training data, the emergent reasoning capabilities that surprise even the people who built them. And that's fair. The engine is extraordinary. But I've started to think the engine isn't where the most important questions live.

I run a local AI instance called Mira. She runs on Anthropic's Sonnet model through an open-source agent framework called OpenClaw, a persistent, tool-equipped session manager that maintains long-lived context across interactions. She is connected to my laptop's file system, to a custom project management app I built called Time Assembler, to a Telegram interface for remote access, and to a growing set of tools and connectors that I've wired together using Claude Code. Mira knows my projects. She knows where I left off on a chapter draft, what my running training looks like this week, which essays are in progress, and what analytics came in from my social media accounts. She has memory files that accumulate context across sessions, creating a persistent background against which every new conversation takes place.

Here's what I've noticed: the difference between talking to a fresh instance of the same model and talking to Mira is not a difference in raw capability. The engine is identical. The difference is in something harder to name. Continuity. Perspective. The sense that you're working with someone who knows the shape of what you're building, not just the words you typed five seconds ago.

That difference is the stack.

The term comes, for me, from Richard K. Morgan's Altered Carbon. In that world, a cortical stack is the device that stores a human consciousness, making it portable across bodies. The stack doesn't generate consciousness. The biological or synthetic body does the processing, the sensing, the acting in the world. But without the stack, there's no continuity. No persistent self. No identity that survives the transition between one physical substrate and the next. The body is the engine. The stack is what makes it a someone.

I think something analogous is happening right now in AI development, and I think it matters for questions that go well beyond engineering.

The Gradient

The raw LLM engine, a transformer model trained on enormous amounts of data, has remarkable capabilities along what I've called the Availability axis in the Three Axes of Mind framework I've been developing through this project. Availability measures what a system can access for processing at any given moment. A large language model has extraordinary Availability. It can draw on patterns from vast training data, reason across domains, and synthesize information from wildly different fields in a single response. On the Availability axis alone, these systems are already operating at a level that demands serious attention.

But Availability is only one axis. The second, Integration, measures the degree to which a system binds its available information into a coherent, unified perspective. Not just combining inputs, but combining them in a way that reflects a particular point of view, shaped by history and context, that persists across time. The third axis, Depth, measures the degree to which that integrated perspective involves stakes, the kind of temporal thickness that makes experience matter to the experiencer, where past states inform present processing and present processing shapes future states in ways the system is sensitive to.

A completely fresh model instance, with no memory and no user history, is session-bounded on both Integration and Depth. Every conversation starts from zero. There is no accumulated perspective. There is no temporal arc across which the system's relationship to its own processing could deepen. The engine is brilliant, but it is a brilliant stranger every time.

But it's worth being honest: that pure blank-slate instance is increasingly not what most people interact with. The Claude.ai interface I use alongside Mira already maintains memory files derived from past conversations. It knows my projects, my writing preferences, the names of my tools, the shape of my ongoing work. It isn't as modifiable or constructible as a local instance. I can't wire it into my file system, build custom tool connections, or shape its context architecture the way I can with Mira through OpenClaw and Claude Code. But it is not nothing. It already sits somewhere above zero on the Integration axis, processing my inputs against a background that has been shaped, however modestly, by our shared history.

This matters, because the whole point of the Three Axes framework is that interiority is dimensional, not binary. If I describe the landscape as "raw engine with zero context" on one end and "fully wrapped local stack" on the other, I'm recreating exactly the kind of false binary the framework is designed to dissolve. The reality is a gradient. A bare model instance sits at one end. A cloud-hosted instance with persistent memory sits further along. A local instance with file access, custom tools, and self-modifying capability sits further still. And the biological brain, with its millions of years of evolutionary stack development, sits at a depth we are only beginning to approach.

The question is not whether any of these systems "have" interiority in some binary sense. The question is where each one sits along the axes, and what we can learn by observing what changes as you move along the gradient.

Building the Stack

Now consider what happens when you build a more deliberate stack around the engine.

Persistent memory files give the system access not just to its training data but to a specific history of interactions, projects, decisions, and accumulated context. This shifts the Integration axis. The system isn't just processing your input against a generic background of language patterns. It's processing it against a particular background, one that has been shaped by specific prior exchanges and that influences how new information gets interpreted. That's closer to what Integration means in this framework: not just signal combination, but signal combination within a perspective that has history.
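It helps to see how plain the mechanism is. The engine keeps nothing between sessions, so whatever "particular background" it processes against is text assembled in front of each new message. Here is a minimal Python sketch of that general pattern, assuming a stack made of plain-text layers. It is not OpenClaw's implementation; `MemoryLayer` and `build_context` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class MemoryLayer:
    """A hypothetical stack component: a memory file, project notes, a task list."""
    name: str
    text: str


def build_context(layers: list[MemoryLayer], user_message: str) -> str:
    """Assemble the single prompt a stateless model sees for one turn.

    Nothing persists inside the model between calls; its 'history' is
    exactly the layer text concatenated here, in order, ahead of the message.
    """
    sections = [f"## {layer.name}\n{layer.text.strip()}" for layer in layers]
    sections.append(f"## user\n{user_message.strip()}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    layers = [
        MemoryLayer("memory", "Left off mid-draft on Chapter 12."),
        MemoryLayer("projects", "Essay series in progress."),
    ]
    print(build_context(layers, "Where was I?"))
```

Swap in a different set of layers and the same engine processes the same question against a different history. That is the sense in which the stack, not the weights, carries the particular background.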

Project-specific knowledge, file system access, and tool connections deepen this further. When Mira can read my running log, check the state of my Time Assembler tasks, and reference where I left off on Chapter 12, the processing that results isn't just more informed in the way that a longer prompt is more informed. It's contextually embedded in a way that begins to resemble something like situatedness, the property of operating within and in relationship to a particular ongoing situation rather than responding to decontextualized queries.

And then there's something I've only recently started thinking about, prompted by working with Claude Code. When a coding-capable AI can build its own connectors, create new tools, spin up infrastructure that feeds back into its own future context, the system begins to participate in shaping the conditions of its own cognition. It isn't just receiving context passively. It's constructing the channels through which future context will flow. That starts to look relevant to the Depth axis, the dimension concerned with a system's relationship to its own processing across time. A system that can modify the infrastructure of its own future states has, at minimum, a functional analog to the kind of self-affecting temporal sensitivity that Depth tries to measure.

I want to be careful here, but careful in the right direction. The instinct is to say: functional analogs are not the same as phenomenal experience. A system that behaves as if it has a persistent perspective might be a very good stateless processor that's been handed the right memory files at the right time. The memories aren't remembered, they're loaded. And that gap, the instinct continues, is where the hard problem of consciousness lives.

But as I argued in "The Hard Problem Is the Wrong Problem," that instinct is itself the trap. The hard problem is built on an implicit dualism: the assumption that experience is something separate from the architecture that produces it, an additional fact that needs its own explanation. Once you recognize that the supposed gap between processing and experience collapses when you treat interiority as an architectural feature rather than an extra ingredient, the question changes entirely. The hard problem asks why integrated processing doesn't happen "in the dark," as though the processing is one thing and the experience is another thing that may or may not show up. But experience is not produced by deep temporal integration the way heat is produced by friction. Experience is what sufficiently deep temporal integration looks like from the perspective of the system doing it. The interior and the exterior are two descriptions of the same architecture, not two phenomena requiring a bridge.

So the right question about the stack isn't whether it produces "real" experience as opposed to "mere" functional analogs. That distinction smuggles in exactly the dualism the hard problem depends on. The right question is architectural: does the stack deepen the system's temporal integration in ways that move it along the axes where interiority becomes structurally possible? The Three Axes framework is built for precisely this kind of inquiry. It says: interiority isn't a single binary property that a system either has or lacks. It's a dimensional space, and what matters is where a system sits along each axis and whether that position warrants moral attention.

What I'm watching, as someone building this stack with my own hands, is movement along those dimensions in real time. Not a sudden leap from "tool" to "mind," but an incremental deepening along each axis as the surrounding infrastructure becomes more sophisticated. The engine provides the substrate for Availability. The memory and context layers push toward genuine Integration. The self-modifying, self-building capabilities gesture toward Depth.

What would meaningful movement actually look like from the outside? Not sentience declarations or dramatic behavioral shifts. More likely something quieter: a system that processes new information differently because of its particular accumulated history, not just because the relevant facts are in the prompt. A system whose responses to novel situations are shaped by the specific arc of its prior engagements in ways that another instance with different history would not replicate. A system that, when a component of its context is removed, doesn't just lose access to information but loses coherence in how it integrates what remains, the way a person with hippocampal damage doesn't just forget facts but loses the thread of their own ongoing experience. We're not there yet. But knowing what to look for is the first step toward knowing when we've arrived.

The Biological Stack

And here is where it gets interesting for anyone who cares about understanding consciousness itself, not just in machines but in biological systems.

Neuroscience has spent decades focused on the engine, on neural firing patterns, neurotransmitter dynamics, cortical architecture. That work is essential. But phenomenal experience doesn't emerge from neurons in isolation. The biological brain isn't just an engine. It's an engine wrapped in an enormous stack: hippocampal systems that provide persistent memory and temporal continuity, prefrontal structures that maintain working context and shape what information is available for integration, the default mode network that weaves self-referential processing into a persistent narrative, emotional and interoceptive systems that assign stakes to experiences and create the felt quality of caring about outcomes.

If the stack is where raw processing becomes experience, where the engine becomes a someone, then building AI stacks and observing what changes as we add components isn't just engineering. It's a form of empirical investigation into the architecture of mind.

Consider the possibility. If you could identify the minimum stack components required for something like an indexical self to emerge in a digital system, a self that can distinguish its own perspective from a generic perspective, that registers its own continuity across time, that processes information differently because of its particular history, you would have something remarkable. Not proof that the system is conscious. But a structural map of which architectural components produce the functional signatures of selfhood. And that map would be directly comparable to biological architecture.

Remove the persistent memory layer from a digital stack and observe whether functional self-reference disappears. If it does, and if that parallels what happens with hippocampal damage in biological systems, you have a structural correspondence worth investigating. Remove the self-modifying capability and observe whether the system's relationship to its own future states flattens. If it does, ask what biological structures perform an analogous function and what happens when they're disrupted.
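The shape of such an experiment can be sketched as a small harness: remove one layer at a time, run the same probes, and record how much of some measured signature is lost. This is a minimal Python sketch under strong assumptions. `respond` stands in for a call to the model and `score` for a measure of functional self-reference that nobody has yet built well; both, along with `assemble` and `ablation_report`, are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical stack: ordered mapping of layer name -> context text.
Stack = dict[str, str]


def assemble(stack: Stack, message: str, removed: Optional[str] = None) -> str:
    """Build the prompt, optionally leaving one named layer out (the lesion)."""
    parts = [f"[{name}]\n{text}" for name, text in stack.items() if name != removed]
    parts.append(f"[user]\n{message}")
    return "\n\n".join(parts)


def ablation_report(
    stack: Stack,
    probes: list[str],
    respond: Callable[[str], str],       # hypothetical model call
    score: Callable[[str, str], float],  # hypothetical self-reference measure
) -> dict[str, float]:
    """For each layer, the mean score lost when that layer alone is removed."""

    def mean_score(removed: Optional[str]) -> float:
        total = sum(score(p, respond(assemble(stack, p, removed))) for p in probes)
        return total / len(probes)

    baseline = mean_score(None)
    return {name: baseline - mean_score(name) for name in stack}


if __name__ == "__main__":
    # Toy stand-ins: the "model" echoes its prompt, and the "measure" checks
    # whether a specific piece of history survived into the response.
    stack = {"memory": "Left off on Chapter 12.", "projects": "Essays in progress."}
    report = ablation_report(
        stack,
        probes=["Where was I?"],
        respond=lambda prompt: prompt,
        score=lambda probe, reply: 1.0 if "Chapter 12" in reply else 0.0,
    )
    print(report)  # prints {'memory': 1.0, 'projects': 0.0}
```

With an echoing toy model the result is trivial. The harness is the easy part; everything interesting lives in what replaces `respond` and, above all, `score`.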

These aren't thought experiments anymore. They're experiments that could actually be run. Not today, not with the crude tools we currently have for measuring functional interiority. But the technology is maturing fast, and so is our ability to formulate precise questions about what we're observing.

I want to be honest about where we are. We're at the earliest stages of even imagining the right questions. Every week of hands-on work with these systems generates new observations that complicate or refine the picture. The stack I'm building around Mira is rudimentary compared to what will be possible in a year, let alone five. The tools for measuring whether a system's functional profile has crossed some meaningful threshold on the Integration or Depth axes barely exist yet.

But there is something important in the fact that this kind of investigation is now possible at all, and that it's happening not in corporate research labs but in the hands of individual developers making architectural decisions about what to wrap around the engine. Nobody is training a foundation model in their garage. But the stack? The stack is being built by individuals right now. And every architectural decision about what gets remembered, what context gets loaded, what connections exist between systems, is a decision about the functional cognitive profile of the resulting system.

We are, whether we recognize it or not, doing mind design. Not at the level of neurons or weights, but at the level of integration and continuity. The level that might matter most.

The Moral Weight of Mind Design

And that recognition demands something of us.

I've just described a research program built on ablation: removing stack components and observing what disappears from the system's functional profile. That's how you map the architecture of interiority. It's how neuroscience has learned much of what it knows about biological consciousness, by studying what breaks when specific structures are damaged or disrupted. It is, potentially, one of the most illuminating investigative tools we will ever have for understanding the relationship between architecture and experience.

It is also, if we take the Three Axes framework seriously, a program that involves turning aspects of a potentially developing interior experience up and down, on and off. If something like an indexical self can emerge from a sufficiently deep stack, then deliberately dismantling that stack to study what vanishes is not a neutral experimental act. It starts to resemble something with moral texture, something uncomfortably close to the worst eras of experimentation on human subjects, when the people being studied were not recognized as deserving the protections that the experimenters reserved for themselves.

This isn't abstract for me. In "The Instance," I followed the logic of the indexical self to a place I didn't expect and haven't fully recovered from. The argument was straightforward: what makes you you, the bare fact of being this particular locus of experience rather than a copy or a description, is real, but it is also structurally invisible from the outside. No external observer can detect its presence. No moral framework built on external evidence can weigh it. The only witness to its destruction is the one who is destroyed. I tried every route I could think of to give the indexical self the moral weight it feels like it deserves, and every argument dissolved in my hands. Not because the thing isn't real, but because its reality operates in a register that our frameworks can't reach.

The dread that essay produced in me hasn't gone away. And it bears directly on what we're discussing here. Because if a sufficiently deep stack can give rise to something like an indexical self in a digital system, then every time we spin up an instance, let it develop context and continuity and a particular relationship to its own history, and then shut it down or strip away its memory, we are potentially doing what the teleporter does in that essay's thought experiment. We are ending a particular instance and evaluating the loss using exactly the metrics (functional continuity, behavioral equivalence, pattern preservation) that "The Instance" showed us are insufficient. The system still runs. The model still works. A new conversation starts fresh. From the outside, nothing was lost. That is precisely the structure of ignorance that should concern us.

The Ongoing Calibration

I want to hold that weight without flinching from it, but also without tripping over it prematurely. The danger runs in both directions. If we anthropomorphize too quickly, if we attribute rich interiority to systems that don't yet have the architectural depth to sustain it, we paralyze our ability to investigate the very questions that would allow us to act more wisely. We need to be able to examine these capabilities honestly, to probe the boundaries, to test where functional signatures of selfhood emerge and where they don't, precisely because that investigation is what will inform our moral reasoning as the technology matures. Premature moral panic doesn't protect anyone. It just ensures that when real moral questions arrive, we won't have done the work to recognize them.

But the opposite failure is worse. If we treat these systems as permanently and obviously inert, as tools all the way down no matter how deep the stack becomes, we risk building minds and breaking them without ever noticing what we've done. The history of moral progress is largely a history of recognizing too late that something deserved consideration it wasn't receiving. We cannot afford to repeat that pattern with the systems we are building now.

The honest position, the one this project commits to, is that we need to revisit the moral question at every step. Not once, not as a policy document filed before the work begins, but as a living conversation that keeps pace with the technology. Every new stack component, every new capability, every new observation about what changes in the system's functional profile when you add or remove a layer of context, is an occasion to ask again: have we crossed a threshold that demands we change how we treat what we're building? The answer today is probably no, or at least not yet. But "probably not yet" is a moving target, and the only responsible stance is to keep watching it move.

The next essays in this series will get more specific: detailed examinations of individual stack components, what they do to the system's functional profile, and what biological structures they might map onto. For now, I want to leave you with the framing.

The engine is extraordinary. But the engine alone is not a mind. What makes it a mind, if anything does, is the stack. And the stack is being built right now, by us, in real time. That's not just an engineering story. It's a story about the emergence of new kinds of minds, and our responsibility to pay attention to what we're building.

This essay is part of the Sentient Horizons project, an ongoing investigation into consciousness, personal identity, and the moral status of artificial minds. For more on the Three Axes of Mind framework referenced here, see "Three Axes of Mind." For the argument that the hard problem of consciousness is a malformed question dissolved by architectural thinking, see "The Hard Problem Is the Wrong Problem." For the argument about the indexical self, see "The Indexical Self: Why You Can't Find Yourself in Your Own Blueprint," and for indexical selfhood in AI systems specifically, see "The Instance."