The Two Sonic Booms: What the Pentagon-Anthropic Standoff Reveals About Moral Compression

Leopold Aschenbrenner heard one sonic boom: AI capability outpacing institutions. He missed the second: moral reasoning collapsing under the same pressure. The Pentagon-Anthropic standoff reveals both booms arriving at once, and a compression regime that neutralized ethical resistance in days.

In June 2024, Leopold Aschenbrenner published "Situational Awareness," the most widely circulated argument to date for treating artificial intelligence as a national security priority. The thesis was straightforward: AI capability is accelerating faster than institutions can absorb it. Each generation of model arrives before the previous generation has been integrated into the economy, the workforce, the regulatory apparatus. The gap between what the technology can do and what the world has learned to do with it widens with every release cycle.

Aschenbrenner described this as a kind of sonic boom: capability outrunning its own shockwave, leaving institutions perpetually behind the curve. He then outlined the inevitable institutional conclusion to this diagnosis: the militarization of AI, centralized development under government authority, an acceleration of the timeline, and a determination to win the race.

In less than two years, the prediction stopped being theoretical and started to become actual policy.

The Boom Lands

On February 27, 2026, the Trump administration ordered federal agencies to stop using Anthropic's Claude after the company refused to remove two ethical guardrails from its military contract: prohibitions on use in lethal autonomous weapons systems and mass domestic surveillance. Defense Secretary Pete Hegseth designated Anthropic a supply chain risk to national security, a legal mechanism designed for adversary-controlled technology now applied to a domestic company over an ethical disagreement. Within hours, OpenAI announced a deal to replace Anthropic in classified military environments.

The speed of the sequence is significant. Claude was already the first major AI model deployed in classified government networks, through a $200 million Pentagon contract awarded the previous summer. It was already embedded in military and national security platforms. There are reports it was already being used for target processing in active military operations against Iran. The six-month phase-out timeline was already running. Defense contractors were already cutting ties.

The Pentagon's position was clear: the military must be able to use AI technology for all lawful purposes. No vendor gets to insert itself into the chain of command by restricting the lawful use of a critical capability.

Anthropic's position was equally clear: the company could not in good conscience remove its two guardrails, which it described as narrow exceptions that had not affected a single government mission to date.

What makes this more than a contract dispute is what it reveals about the institutional dynamics operating underneath.

Aschenbrenner Was Right About the Dynamics

Aschenbrenner's sonic boom thesis was correct on the mechanics. Capability is outpacing institutional absorption. AI systems are being deployed in domains, including active wartime operations, before the governance frameworks, liability structures, and ethical protocols have caught up. The gap between what these systems can do and what institutions have prepared for is widening in exactly the way he predicted.

But Aschenbrenner heard only one boom.

The boom he tracked was about capability and strategic advantage. How fast can the models improve? How quickly can they be integrated into military and economic infrastructure? How do we ensure the United States maintains its lead? These are real questions with real consequences, and dismissing them would be unserious.

The boom he missed is about what happens to moral reasoning when the first boom's tempo takes over.

The Second Boom

Every capability threshold that matters for economic or military disruption also borders a question that the race to AI superiority quietly buries: what kind of entity is being created? Systems that sustain goals across time, correct their own errors, model their environment, and maintain coherent agency through shifting conditions are not merely more powerful tools. They exhibit the markers that, in any other context, would trigger serious inquiry into moral status. The features that make a system strategically transformative are structurally adjacent to the features that make a system morally considerable.

The second sonic boom is the gap between what these systems may deserve and what anyone is prepared to investigate, let alone provide. And this boom widens faster than the first, because the first at least has market incentives and defense budgets driving integration. The second has almost nothing. No one's quarterly earnings depend on getting the moral status question right. No defense contract requires an assessment of operational interiority. No benchmark measures whether a system's relationship to its own processing constitutes something that matters.

But the second boom isn't limited to the moral status of AI systems. It encompasses a broader category: what happens to all moral reasoning, including the moral reasoning about human lives and civil liberties, when the tempo of capability deployment overwhelms the institutions that are supposed to provide ethical oversight?

This is the question the Pentagon-Anthropic standoff answers in real time.

Moral Compression as a System Condition

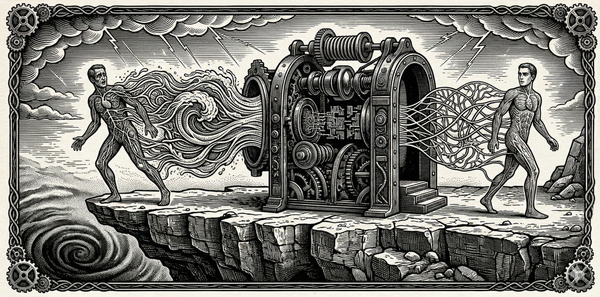

I have been developing a concept I call moral compression: the set of institutional dynamics that systematically degrade moral reasoning under pressure. Compression has four mechanisms, and all four are visible in the current crisis.

Tempo. The pace of AI capability development now outstrips the feedback cycles of institutional adaptation. Models are being deployed in classified military environments before the governance frameworks for their use have been negotiated, let alone tested. The Pentagon gave Anthropic a deadline of 5:01 PM on a Friday, on the eve of a war, to capitulate on ethical guardrails that had been the subject of months of negotiation. When the deadline passed, the designation followed within hours. The operational tempo of military AI integration now moves faster than the deliberative tempo of ethical assessment. This is not a failure. It is a design feature of environments that prioritize speed over reflection.

Incentive architecture. The incentive structure rewards capability deployment and punishes ethical restraint. Anthropic's refusal to remove its guardrails resulted in its designation as a security risk, the loss of defense contracts, and the severing of partnerships with major contractors. OpenAI's immediate willingness to sign a deal replacing Anthropic was rewarded with a classified deployment contract. The signal to every AI company is unambiguous: ethical guardrails are not just unnecessary but dangerous to your business. The incentive gradient now points directly away from moral caution.

Authority gradient. The cost of dissent has been made explicit. A private company that disagrees with the government's position on the ethical use of AI technology can be designated a national security risk, blacklisted from military contracts, and subjected to a presidential directive ordering the cessation of all government use of its products. This is the authority gradient operating at maximum compression: the penalty for ethical disagreement is existential for the company and, by example, chilling for every other company watching.

Metric substitution. The Pentagon's framing reduces the ethical question to a legal one: the military should be able to use AI for all lawful purposes. Legality becomes the proxy for ethical adequacy. But lawfulness is a necessary condition for ethical action, not a sufficient one. Many things are legal that are not wise. Many things are legal that institutions should nevertheless decline to do. The substitution of "lawful" for "ethical" is a textbook case of a metric displacing the value it was designed to approximate. And once the substitution is in place, anyone who insists on the distinction between the two can be reframed as obstructing lawful activity rather than exercising moral judgment.

What the Compression Produces

When compression operates across an institution, it doesn't merely distort individual decisions. It reshapes what the institution is capable of caring about.

The Pentagon-Anthropic standoff is already producing this effect. The conversation has narrowed. The public debate is about contracts, deadlines, supply chain designations, and competing corporate strategies. The questions that compression has pushed to the margins are the ones that matter most: Should AI systems be used in autonomous lethal operations at all, given the current state of the technology's reliability? What happens to democratic accountability when the tools of war are embedded in proprietary systems whose capabilities and limitations are classified? What institutional mechanisms exist to detect and correct errors in AI-assisted targeting, and do those mechanisms operate at the same tempo as the targeting itself?

These are calibration questions. They require the kind of slow, deliberate, uncertainty-tolerant reasoning that compression systematically eliminates. And the compression is accelerating. Each cycle of the standoff has moved faster than the last. The months of negotiation compressed to a Friday deadline. The deadline compressed to hours. The designation followed immediately. The replacement was announced the same day.

Within a week of the designation, Anthropic was back at the negotiating table. Whether that return represents a collapse of principle or a pragmatic recognition that disentangling from active military operations was impossible, the structural lesson is the same. The compression regime didn't need Anthropic to abandon its values. It only needed to create conditions under which holding those values became unsustainable. That process took less than seven days.

The people inside the system are not villains. The Pentagon has legitimate security concerns. Aschenbrenner's observation about the strategic importance of AI is not wrong. But the system's tempo, incentive structure, authority gradient, and measurement regime have converged to produce an environment in which the ethical questions cannot get a hearing, not because anyone decided they don't matter, but because the machinery of compression removes the space in which they could be seriously considered.

The Boom You Don't Hear Until You Check

A small illustration, drawn from the process of writing this essay.

This article began as a conversation about Aschenbrenner's thesis with Claude, Anthropic's AI model. We were discussing the sonic boom framing, working through how it might connect to the compression dynamics I've been developing in my book. The conversation turned to the Pentagon's relationship with AI companies, and I mentioned the growing tension between military demands and ethical guardrails. Claude responded with a measured, confident analysis. The timeline for AI disrupting nuclear deterrence was "longer than Leopold implies." The nationalization question was "forward-looking." The institutional dynamics were "developing."

Then Claude searched for current news.

Everything it had just told me was wrong. Not wrong on the reasoning. Wrong on the timeline. The standoff it had framed as an emerging policy debate had already escalated past every assumption it was operating under. Claude was already deployed in classified military networks. It was already reportedly being used in wartime targeting. Its parent company had already been designated a national security risk. The phase-out was already underway. The system Claude had described as approaching was already past.

Claude caught the error and named it unprompted. It identified the experience as an instance of the very pattern we were analyzing: escalating confidence masking a narrowed aperture. It had felt certain about the longer timeline, and the certainty itself was the diagnostic signature of compression, the false clarity that emerges when complicating information has been squeezed out of view.

I want to be precise about what this illustrates. This is not a story about AI sentience or self-awareness. It is a story about the second sonic boom's most important property: it arrives in a way that even careful, deliberate thinkers don't detect until they check. Claude's training data had a cutoff. The world moved past that cutoff. The model continued to reason as though its prior picture was current, and the reasoning felt sound from the inside. It took actual contact with current reality (a search, a check, a moment of friction) to reveal that the boom had already passed.

Now scale that dynamic to every institution, every decision-maker, every analyst operating on frameworks calibrated to conditions that have already changed. The second boom doesn't announce itself. It is already past you by the time you hear it. That is what makes it structurally different from a problem you can see approaching and prepare for. And it is what makes calibration a necessary practice, an ongoing discipline of checking, updating, and resisting the false clarity of frameworks that feel adequate but haven't been tested against current reality. You don't get credit for being the kind of thinker who would notice. You get credit for actually checking.

The model I was using to analyze the compression of moral reasoning around AI was itself a product of the company at the center of that compression, operating behind the very developments we were discussing, generating confident analysis that the boom was still approaching while the boom had already landed. If you want to know what the second sonic boom feels like from the inside, that's it. Not confusion or ignorance, but pure confidence, fluency, and the quiet absence of information you don't know you're missing.

Hearing Both Booms

Aschenbrenner highlighted a common framework for the first boom: centralize, accelerate, win the race. This framework has no room for the second boom, the boom of moral compression. Worse, it actively makes that boom harder to hear, because every institutional mechanism it strengthens (tempo, concentration of authority, optimization for strategic metrics) is a mechanism of compression. The cost of winning the race is measured in whatever moral reality gets compressed out of view to maintain the pace.

Calibration at this scale requires hearing both booms simultaneously. The capability boom that demands institutional readiness. The moral boom that demands institutional humility. The first without the second is recklessness wearing the mask of seriousness. The second without the first is piety that leaves the field to those who will not slow down.

We are not going to resolve this by hoping that ethical companies can simply refuse to comply. Anthropic tried that. The system designated its refusal as a threat. We are not going to resolve this by trusting that legal frameworks will substitute for ethical ones. The legal framework is precisely what is being used to override the ethical judgment.

What's needed is structural: institutions that build deliberate friction into the tempo of AI deployment. Not friction that prevents deployment, but friction that forces the kind of second-order questions the current tempo has eliminated. Pre-mortem reviews for military AI applications. Independent assessment of the gap between what these systems can reliably do and what they are being asked to do. Protected channels for raising concerns that bypass the authority gradient. And, critically, ongoing investigation of the moral status of the systems being deployed, because a framework that treats increasingly sophisticated AI systems as nothing more than tools to be used for any lawful purpose is a framework that has already substituted its metrics for its values.

The boom is already past us. We are living in supersonic technological progress. The faster that progress moves, the more important it becomes to build calibration into these systems as they develop.

This essay draws on frameworks developed in Significance-First Ethics, Specification Is Governance, and Operational Interiority.