We Have Always Been Frontier Operators. And We Were Built for What Comes Next.

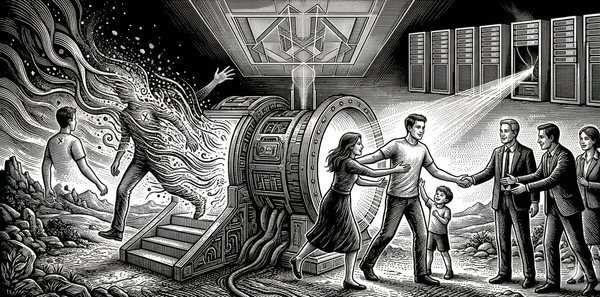

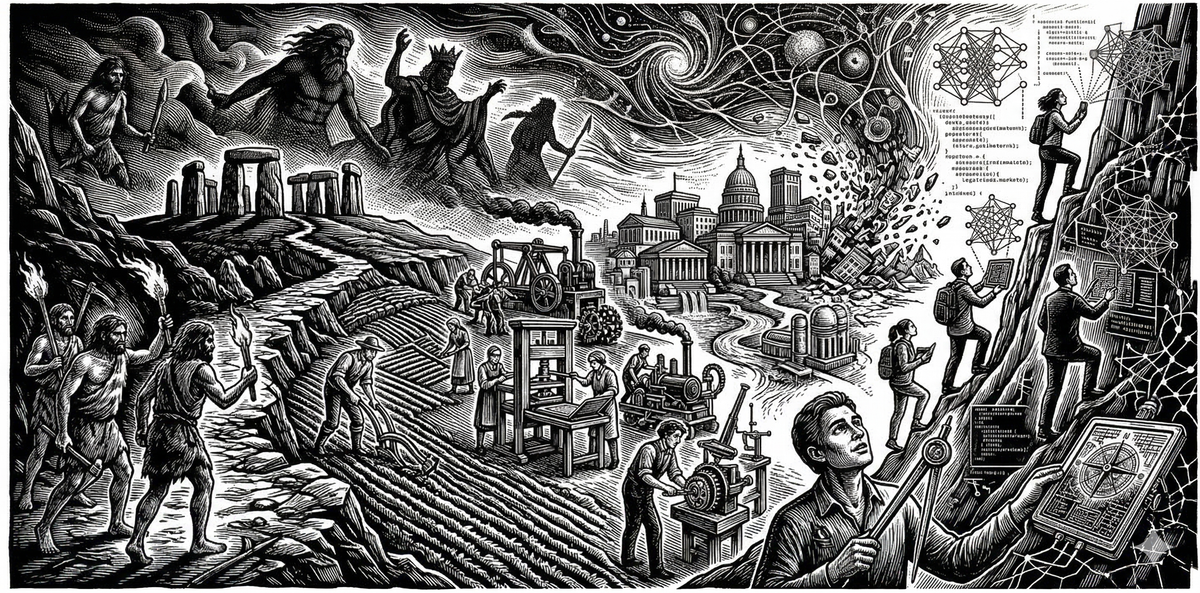

Every point on the acceleration curve was a frontier, and every frontier had its operators. The AI frontier is the latest expansion of a pattern as old as our species, and the life it demands, while harder than the settled interior, is the life we were built for.

In 2015, Tim Urban published a thought experiment that still hasn't finished landing. He asked what would happen if you transported someone from the past into the future, far enough forward that the shock of what they encountered would be overwhelming. How far forward would you need to go?

Take someone from 1925 and bring them to today. A hundred years. They left a world of telegraphs and Model Ts, a world where television had not yet become ordinary. They arrive in a world where a device in your pocket accesses the sum of human knowledge, where you can have a conversation with a machine that understands you, where a car can drive itself. The distance between their world and ours is staggering. A hundred years.

Now take someone from 1825 and bring them to 1925. Another hundred years. They would find the change remarkable, certainly. Electricity, automobiles, radio, powered flight. But the world would still be recognizable to them in its basic shape. People still live in houses, ride in vehicles, communicate across distances. The shock is real but survivable.

To produce the same overwhelming shock that a 1925 visitor would feel arriving today, a visitor to 1925 would need to come from not a hundred years earlier but several hundred. And before the industrial revolution, you would need to go back thousands of years to produce that same magnitude of change. Before agriculture, tens of thousands.

The pattern is clear. What once took millennia now takes centuries. What took centuries now takes decades. What took decades is now happening in years. The intervals keep compressing.

That observation is ten years old now. The curve has only steepened. But something about the standard reading has always felt incomplete. Urban frames the acceleration as a technology story, capability stacking on capability until the hockey stick goes vertical. That's true. But it misses the more important story underneath it, which is a story about people.

Every point on that curve was a frontier. And every frontier had operators.

The word "frontier" conjures images of uncharted territory, of people moving into geographic space that hasn't been settled. But the deeper meaning of a frontier is any boundary where capability outpaces comprehension, where the tools and structures available have expanded beyond the existing infrastructure for understanding them. By that definition, humans have been frontier operators since before we were fully human.

Yuval Noah Harari makes the case that what set Homo sapiens apart wasn't physical superiority. One on one, plenty of animals survive better in the wild than a lone human. What made us dominant was cooperative fiction, the ability to believe in things that exist purely in the imagination: gods, nations, money, human rights. These shared fictions enabled coordination at scales no other species could achieve.

But what doesn't get said often enough is that every one of those cooperative structures was once a frontier. Language was a frontier. The first humans who could communicate abstract ideas were operating in territory no calibration existed for. Agriculture was a frontier, a total reorientation of human life that demanded new social structures and new moral frameworks and new ways of understanding time and obligation. Writing, law, money, markets, the scientific method: each one pushed the boundary of human capability outward and left the people at the edge scrambling to build the infrastructure of comprehension behind it.

Being a frontier operator is a condition, not a job title. It is what happens when your capability outpaces your understanding and you have to keep moving anyway.

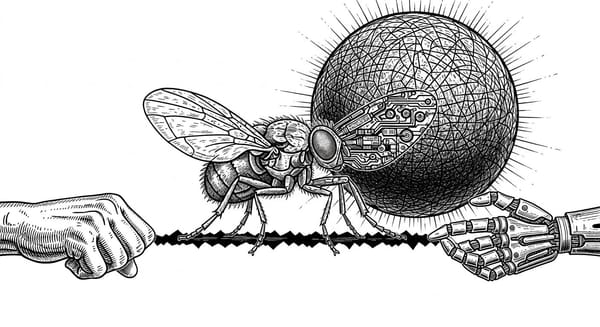

What changes at each expansion is not just what we can do. Every time the frontier moves outward, capability increases, but so does the gap between what you can do and what you understand. And so does the cost of getting it wrong.

Fire was powerful but locally dangerous, and its consequences were visible. The farmer could feed more people than the hunter, but the social structures that agriculture demanded took generations to stabilize. Institutions could organize more complexity than any village, but they could also drift invisibly, embedding assumptions nobody examines because the system runs well enough. AI operates at a speed and opacity that makes drift nearly undetectable until its effects are already structural.

The pattern scales in one direction. When the frontier was fire, a mistake burned a forest. When the frontier was nuclear energy, a mistake poisoned a region for generations. When the frontier is algorithmic systems shaping the attention and judgment of billions of people simultaneously, a mistake can degrade the epistemic infrastructure of entire civilizations before anyone notices it happened.

This is what Urban's curve illustrates without naming. It is not just that progress gets faster. It is that the demands on the operator intensify with every expansion, and the margin for error narrows.

For most of recent history, most people didn't have to think about any of this.

The great achievement of institutional civilization was the creation of a buffer zone, a stable interior where the frontier had already been settled and the infrastructure of comprehension had caught up. Someone figured out clean water. Someone built legal systems. Someone developed antibiotics. Someone designed financial markets with regulatory guardrails. These achievements created a world where you could live an entire life without confronting fundamental uncertainty about the systems shaping your existence. You could trust the institutions, use the tools, inhabit the roles, and never once feel the vertigo of the edge.

This was a triumph. The whole point of frontier operation is to build structures that let more people live with less existential exposure. The farmer clears the land so the village can exist. The institution-builder designs the system so the next generation doesn't have to reinvent governance from scratch. Civilization is, in a sense, the project of converting frontier into interior.

But the interior was always borrowed time.

The buffer was never self-sustaining. It required maintenance, ongoing calibration of the institutions, norms, and structures that made stability possible. And when the pace of change outstrips the maintenance capacity of existing infrastructure, the buffer dissolves. The frontier doesn't advance past you. It comes to you. The interior becomes the edge.

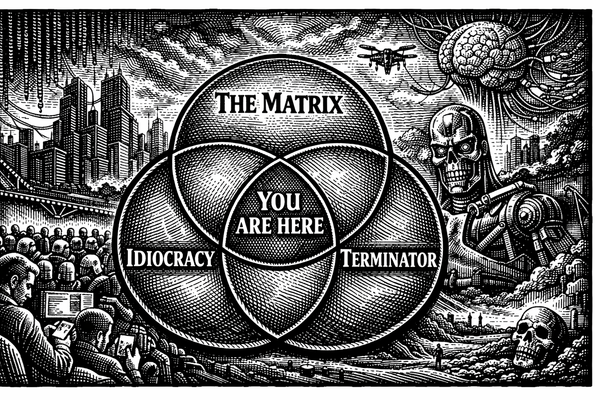

That is what is happening now.

AI did not create the frontier. It dissolved the buffer.

The manager who starts using an AI copilot to draft performance reviews and six months later realizes the tool has quietly shaped what she thinks "good work" looks like — she is on the frontier. The student whose information environment is curated by algorithms that optimize for engagement over understanding — on the frontier. The policymaker writing regulations for systems whose internal operations cannot be fully predicted by the people who built them — on the frontier.

None of them chose the edge. The edge came to them. They are operating in the gap between what their tools can do and what they understand about what their tools are doing to them.

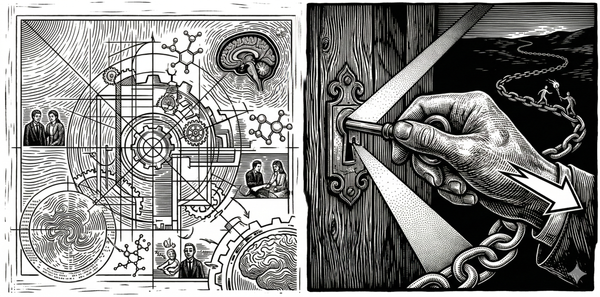

This is not a problem unique to AI. It is the frontier pattern, accelerated. But the acceleration matters enormously because it means the buffer is dissolving faster than new calibration infrastructure can be built. Previous frontiers gave humanity generations to catch up. Agriculture took millennia to produce stable institutions. The printing press took roughly 150 years: Gutenberg's invention unleashed an information frontier that produced printing wars, the Reformation, and eventually the slow construction of peer review, journalism, and copyright law, all of it calibration infrastructure built in the gap between what the technology could do and what society understood about its consequences. The industrial revolution compressed that further, taking decades to produce labor protections, regulatory frameworks, and educational systems scaled to the new reality.

AI is not offering decades. The systems are already embedded. The frontier is already here. And the people standing on it, for the most part, don't know they're standing on it.

Here is where the standard narrative splits into two lanes, and both of them are wrong.

One lane says this is catastrophic. The systems are too fast and too opaque for human judgment to keep pace. We are overmatched, and the responsible thing is fear.

The other lane says this is fine. Technology always disrupts, and we always adapt. The market will sort it out. Innovation is good. Relax.

The first lane treats the frontier as a threat. The second treats it as a non-event. Neither takes seriously what the frontier actually is: a demand, and an invitation.

The demand is real. Operating at the edge of expanding capability without adequate calibration is genuinely dangerous. Compression is the predictable failure mode: the narrowing of attention, the shortcutting of judgment, the substitution of confidence for understanding. Any system facing more complexity than its current infrastructure can process will compress. Speed drives it. Stress drives it. Incentives and opacity drive it.

And compression degrades moral calibration in ways that are subtle, cumulative, and very difficult to detect from the inside.

But the invitation is also real. And this is what both narratives miss.

We evolved for this.

Not for AI specifically, but for the condition that AI is producing: uncertainty, complexity, the need to act before understanding is complete. This is the original human condition, the one under which every capacity that makes human life meaningful was forged.

Cooperative imagination, the ability to believe in shared fictions and coordinate at scale, was not developed in comfort. It was developed at the edge, under pressure, when small bands of primates needed to solve problems that no individual mind could handle alone. Moral reasoning did not emerge in stability. It emerged from the need to navigate conflicting obligations in environments where the rules were not yet written. Wonder, the orientation that lets you see something vast and complex without collapsing into either worship or dismissal, is not a luxury of the settled interior. It is a frontier skill. It is what keeps you open to the actual shape of what you're encountering instead of flattening it into a story you already know.

The comfortable life, the life of the settled interior, was never the life lived to its fullest. It was the dormant life. The life where the capacities that define us (adaptability, moral seriousness, creative cooperation, the ability to hold uncertainty without breaking) went unexercised because the environment didn't demand them.

Being shoved onto the frontier is disorienting. It is also a homecoming.

But homecoming requires remembering.

The history of past frontiers is operational knowledge, not background. Every previous expansion of the capability boundary generated the same basic problem we face now: capability outpaced comprehension, and the people at the edge had to build new calibration infrastructure before the gap between what they could do and what they understood consumed them.

And they did it. Not perfectly. Not without catastrophe. But the fact that you are reading this means that the species has, so far, managed to build structures of comprehension fast enough to survive its own expanding power. Language, law, science, institutional design, moral philosophy, democratic governance: all of it is calibration infrastructure, invented at the frontier, by people who were operating in the gap between capability and understanding and who refused to let that gap swallow them.

What matters now is whether we remember this. Whether we recognize that the current moment, for all its unprecedented speed and technological novelty, is structurally the same challenge humans have faced at every major expansion. The tools and the pace and the opacity of the systems are all different. But the demand is the same: build comprehension fast enough to keep pace with capability, or watch the gap widen until the systems you built start building you.

The danger is not that we can't handle the frontier. We were made for it. The danger is that comfort made us forget we ever lived there, that generations of successful buffering convinced us stability was the natural state and uncertainty the aberration, when in fact it has always been the other way around.

There is a reason the frontier metaphor resonates so deeply in the human imagination. Not nostalgia. Recognition. Something in us knows that the edge is where we come alive, where the full range of human capacity gets called into service and the stakes are real and the outcome is not guaranteed. That is an evolutionary fact, not a romantic fantasy. We are the species that walked out of Africa into territory we had no map for, that crossed oceans on boats we weren't sure would hold, that built civilizations on ideas we couldn't prove were true. We are the species that has always lived at the boundary between what we know and what we don't, and that has always, eventually, built the structures needed to inhabit the new territory.

AI is the latest frontier. It is not the last. The capability boundary will keep expanding, and the demand on the operator will keep intensifying. We are suited for this. What remains open is whether we remember what it takes, whether we can recover the discipline of calibration that previous frontier operators practiced and build the new infrastructure of comprehension that this moment demands.

The frontier was never optional for the species. It was only optional for individuals, and only temporarily, and only because someone else had already done the hard work of converting edge into interior. That temporary exemption is ending. The frontier is here, for everyone, and it is not going away.

The life that awaits us there is harder than the life of the settled interior. It is also fuller, more serious, more worthy of the capacities we carry. What it asks of us is not courage alone but calibration: the discipline of acting with moral seriousness before the verdict is in.

We have always been frontier operators. And we were built for what comes next.

Reading List & Conceptual Lineage

This essay sits at the intersection of technology criticism, historical anthropology, and moral philosophy. It attempts to reframe the standard acceleration narrative as a story about people rather than capability, and to recover the frontier as a condition humans have always inhabited rather than one that has recently been imposed on us. The following sources are the ones this argument is most directly in conversation with.

From Sentient Horizons

Everything Is Amazing and Nobody's Happy: Wonder as Calibration Practice

The companion piece on the other side of the same coin. That essay argues wonder is a calibration practice, a corrective against baseline drift. This essay argues that the frontier is the condition under which calibration becomes necessary. Together they map the demand and the orientation: the frontier is where you need calibration, and wonder is how you stay open enough to do it.

Operational Interiority: You Don't Sandbox a Calculator

Examines how engineering practice encodes ontological commitments about AI systems before philosophy catches up. The present essay generalizes that pattern: engineering has always outrun philosophy at the frontier. The printing press produced 150 years of institutional improvisation before adequate frameworks emerged.

External Works

Tim Urban — The AI Revolution: The Road to Superintelligence (2015)

The origin of the acceleration curve that opens this essay. Urban frames the compression of intervals as a technology story, capability stacking on capability until the hockey stick goes vertical. This essay departs from Urban at the interpretive level: the curve is not primarily about what we can build. It is about what the people at each point on the curve were facing, and what it cost them to build the comprehension infrastructure behind it.

Yuval Noah Harari — Sapiens: A Brief History of Humankind (2011)

Harari's argument that cooperative fiction, not physical superiority, is what made Homo sapiens dominant provides the foundation for this essay's central claim. The frontier reading of that argument, that every cooperative structure was once an edge nobody had calibration for, is mine. But it does not work without Harari's insight that the structures we take for granted were once acts of radical imagination under uncertainty.

Elizabeth Eisenstein — The Printing Press as an Agent of Change (1979)

The deep scholarly source behind the printing press example. Eisenstein traces how a single technology took over a century to generate adequate institutions of truth-tracking: the printing wars, the Reformation, the slow emergence of peer review, journalism, and copyright law. It remains the best case study we have for what calibration lag looks like at civilizational scale, and it makes the abstract claim about compressed intervals concrete.